Best Physical AI Datasets: Training Real-World Models

The main obstacle for the physical AI industry is the so-called "data wall": standard open data sources cannot be used to train models at full scale. The core problem lies in the fundamental difference between passive observation and active interaction. Video conveys the visual result of an action, but it carries no direct record of the physics behind it. To function successfully, physical AI systems need internal movement parameters, such as motor torque or the grip force required to lift a fragile or heavy object.

This shortage of physical information is driving a transition from traditional "image-text" formats to vision-language-action (VLA) architectures. In such a model, visual perception and language commands are integrated directly with physical actions and real-time sensor feedback. Training real-world models requires datasets in which every video frame is synchronized with data on the state of the mechanisms and their interaction with the environment. Only this approach lets AI go beyond simple pattern recognition and learn to handle physical objects confidently under high uncertainty.

Quick Take

- The future belongs to vision-language-action models that synchronize vision and language directly with physical commands.

- Direct human teleoperation of a robot provides the highest quality data, though the cost of such collection can reach tens of dollars per minute.

- Virtual environments allow for "living through" hundreds of hours of experience in one real hour, though the "sim-to-real gap" remains a challenge.

- Creating a "universal brain" allows for the transfer of skills between completely different types of robots.

- Data curation and a focus on rare scenarios are more important for safety than millions of identical recordings.

Data Collection Strategies for Robot Training

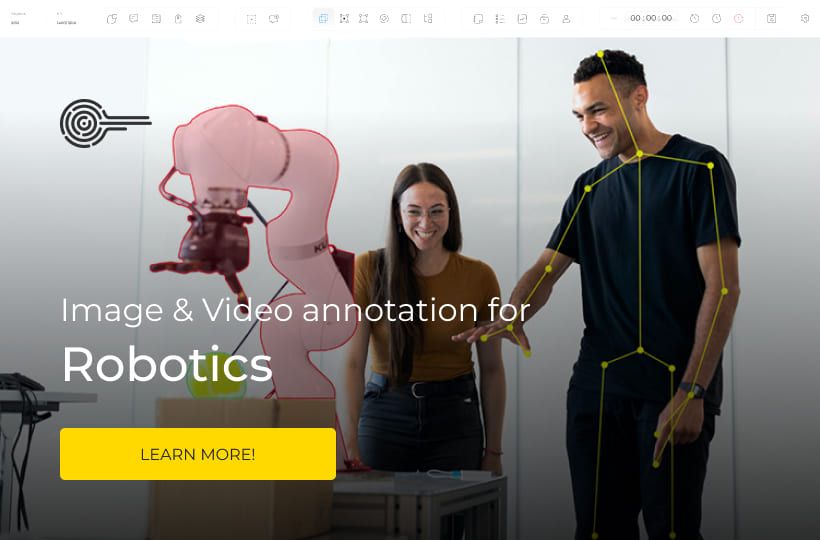

In order for artificial intelligence to confidently control a mechanical body in the real world, it needs access to specific information that combines visual imagery with physical forces. Today, developers use several primary methods to gather high-quality robotics datasets, each with its own advantages and challenges.

Teleoperation – Direct Transfer of Human Experience

This method is considered the "gold standard" of quality, as it records a human performing the task through the machine's own body. The operator uses VR headsets or specialized manipulators to literally "lead the robot by the hand", showing it exactly how to interact with objects. During this process, the system collects extremely valuable multimodal datasets, which include video, the angle of every joint, and the contact force at every point along the trajectory.

The economics of this approach are demanding, as recording successful actions can cost tens of dollars per minute of a professional's work. The main value lies in the high-precision annotation of every moment: the model must understand not just the fact that an object moved, but the logic and effort behind the movement. Such detailed sensor data helps teach the system "why" a certain decision was made, which is critically important for safety and stability in real-world conditions.
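Recording video, joint angles, and forces together only helps if the streams are aligned in time. The sketch below shows one simple way to do that, assuming each stream carries its own timestamps in seconds: every frame is paired with the nearest sensor sample, and frames without a close enough sample are marked as gaps. The function names, the `max_gap` tolerance, and the sample data are all hypothetical.

```python
from bisect import bisect_left

def nearest_index(timestamps, t):
    """Return the index of the value in sorted `timestamps` closest to `t`."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer in time.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1

def align_frames(frame_times, sensor_times, sensor_values, max_gap=0.02):
    """Pair each video frame with the nearest sensor sample.

    Frames with no sample within `max_gap` seconds get None, so gaps stay visible
    instead of being silently filled with stale readings.
    """
    aligned = []
    for t in frame_times:
        j = nearest_index(sensor_times, t)
        if abs(sensor_times[j] - t) <= max_gap:
            aligned.append((t, sensor_values[j]))
        else:
            aligned.append((t, None))
    return aligned

# Hypothetical data: a 10 Hz camera and a faster, irregular joint-angle stream.
frame_times = [0.00, 0.10, 0.20, 0.30]
sensor_times = [0.00, 0.04, 0.09, 0.13, 0.21]
joint_angles = [0.10, 0.12, 0.15, 0.17, 0.22]

pairs = align_frames(frame_times, sensor_times, joint_angles)
for frame_time, angle in pairs:
    print(frame_time, angle)
```

Nearest-sample matching is the simplest choice here. Interpolating between neighbouring samples is a reasonable alternative for smooth signals such as joint angles.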

Digital Twins and Virtual Training

When collecting real data becomes too expensive or dangerous, simulators such as NVIDIA Isaac Sim or PyBullet come to the rescue. These are virtual data factories where digital copies of robots can repeat a task millions of times without the risk of damaging expensive equipment. Training in such environments is very fast: a machine can "live through" hundreds of hours of virtual experience and learn basic movement or balancing skills in a single real hour.
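The "hundreds of hours in one real hour" figure follows from simple arithmetic: many copies of the environment run in parallel, and each may advance faster than real time. The sketch below works through that calculation. The environment count and speed-up factor are illustrative assumptions, not measurements from any particular simulator.

```python
def simulated_hours(wall_clock_hours, num_parallel_envs, realtime_factor):
    """Simulated experience gathered in a given amount of wall-clock time.

    realtime_factor: simulated seconds advanced per wall-clock second
    in each environment (1.0 means real time).
    """
    return wall_clock_hours * num_parallel_envs * realtime_factor

# Hypothetical configuration: 256 environments, each running at 2x real time.
hours = simulated_hours(wall_clock_hours=1.0, num_parallel_envs=256, realtime_factor=2.0)
print(f"{hours:.0f} simulated hours per real hour")
```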

However, the main problem with this method remains the so-called "sim-to-real gap". It is very difficult to configure a virtual world so that its physics fully match real-world surface friction, lighting, or weight distribution. If this gap is too large, a robot that worked perfectly in simulation may turn out to be helpless during its first step onto a real office or factory floor.

Learning via Human Visual Demonstrations

This approach is based on the ability of algorithms to observe human actions without direct control of the robot's mechanisms. Instead of "feeling" the movement through teleoperation, the system analyzes video recordings of a human performing work and attempts to transfer that logic to its own mechanics. This is a significantly cheaper way to expand the knowledge base, as it allows for the use of massive amounts of existing video material for pre-training.

To effectively compare learning methods through demonstrations, the following characteristics can be highlighted:

Using such demonstrations allows for a significant acceleration in system development, as the robot gains a general understanding of what a successful task completion looks like. While this method does not provide the same precision as direct teleoperation, it serves as an excellent foundation for further refining skills in the real world or through simulations.

Strategies for Forming Intelligent Datasets

To move beyond simple laboratory tests, modern physical AI requires colossal volumes of information that reflect the complexity of the real world. The main focus of development has shifted from hardware improvement to the creation of massive databases that allow algorithms to understand physics, movement logic, and the consequences of every action.

Universality and Scaling of Cross-Platform Data

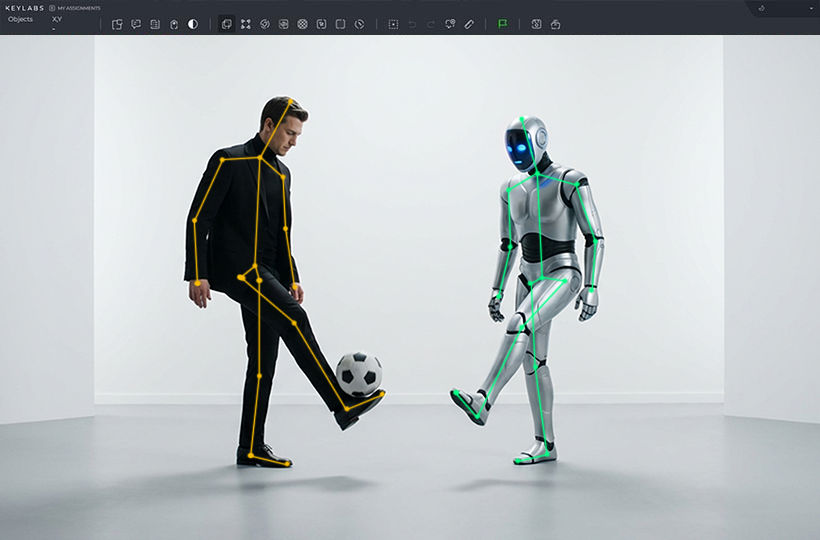

One of the most important stages of development is the creation of so-called foundation models, which can process information from completely different types of mechanical bodies. Instead of training a separate algorithm for each specific manipulator, developers use multimodal datasets that combine the experience of wheeled platforms, quadruped systems, and humanoids. This makes it possible to build a universal intelligence that understands general principles of spatial interaction regardless of a specific device's mechanics.

This approach is pursued by companies whose main goal is to create a general "brain" for robotics. By using vast robotics datasets collected from thousands of different scenarios, the model learns to transfer skills from one platform to another. This can greatly accelerate training, as knowledge of how to open a door or bypass an obstacle becomes available to any robot connected to the shared system.

High-Precision Capture of Human Experience and Sensorics

The quality of physical model training depends directly on how precisely the parameters of a human's successful task execution are captured. For this purpose, complex recording systems transform every movement of a professional into a digital footprint that a neural network can process. This allows for the accumulation of real-time sensor data, including visual sequences, micro-changes in weight distribution, accelerations, and object gripping forces.

To create comprehensive knowledge bases, developers typically collect the following types of data:

- Visual streams. High-definition video from multiple angles for in-depth spatial analysis.

- Proprioception. Data on the state of every motor and the joint angles of the robot during movement.

- Tactile feedback. Information regarding pressure and friction arising from contact with objects.

- Force-torque indicators. Precise measurements of efforts applied to overcome material resistance.

Thanks to this detailed approach – actively used by Tesla and Figure – machines learn to imitate natural human kinematics. The availability of this data allows algorithms to understand the physical laws behind every gesture, making robot behavior smooth and safe for the surrounding environment.
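The four data types listed above can be pictured as fields of a single synchronized record. The sketch below is one possible layout, not an established schema: all class names, field names, and units are assumptions chosen for illustration. It also includes a basic consistency check of the kind a collection pipeline might run before accepting an episode.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class TimeStep:
    """One synchronized sample of an episode (all field names are illustrative)."""
    timestamp: float                # seconds since the start of the episode
    camera_frames: Dict[str, str]   # visual streams: camera name -> image path
    joint_positions: List[float]    # proprioception: joint angles in radians
    joint_torques: List[float]      # proprioception: motor torques in N*m
    tactile_pressure: List[float]   # tactile feedback: one reading per sensor pad
    wrench: List[float]             # force-torque: [Fx, Fy, Fz, Tx, Ty, Tz]

@dataclass
class Episode:
    instruction: str                # language command given for the task
    steps: List[TimeStep] = field(default_factory=list)

    def problems(self) -> List[str]:
        """Return a list of basic consistency problems (empty if none found)."""
        issues = []
        for i, step in enumerate(self.steps):
            if len(step.wrench) != 6:
                issues.append(f"step {i}: wrench needs 6 components")
            if len(step.joint_positions) != len(step.joint_torques):
                issues.append(f"step {i}: joint arrays differ in length")
            if i > 0 and step.timestamp <= self.steps[i - 1].timestamp:
                issues.append(f"step {i}: timestamp not increasing")
        return issues

# Hypothetical two-step episode for a 2-joint gripper arm.
episode = Episode(
    instruction="pick up the glass",
    steps=[
        TimeStep(0.00, {"wrist": "frames/000.png"}, [0.10, 0.50], [0.8, 1.1],
                 [0.0, 0.0], [0.0, 0.0, 0.0, 0.0, 0.0, 0.0]),
        TimeStep(0.05, {"wrist": "frames/001.png"}, [0.12, 0.52], [0.9, 1.2],
                 [0.3, 0.4], [0.1, 0.0, -1.5, 0.0, 0.0, 0.0]),
    ],
)
print(episode.problems())
```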

Integration of Logical Models and Open Ecosystems

Recently, the merging of physical skills with linguistic logic through large multimodal models has become critically important. It adds an understanding of context and cause-and-effect relationships to raw movement coordinates. Collaborative projects let companies share knowledge and build giant experience libraries that would be out of reach for individual market players.

When physical movement data is combined with the logic of modern language models, the robot gains the ability to reason. For example, a system begins to understand that a glass should be set down carefully, not just because it is written in the code, but because glass is fragile by nature. This synthesis makes robot training much more effective, as it allows machines to follow complex instructions and independently handle non-standard situations based on accumulated collective experience.

Edge Cases and the "Long Tail" of Errors

The problem of errors in a physical environment differs fundamentally from digital glitches due to the risk of real damage or injury. The slightest inaccuracy in an algorithm can lead to broken glass or a collision with a person; therefore, the greatest attention is paid to the so-called "long tail" of rare cases.

High Stakes and the Price of Error in the Real World

In traditional AI development, a model error usually means a wrong recommendation or a typo, which is easily fixed. In robotics, however, a wrong action can have physical consequences, such as damaging expensive equipment or endangering people nearby. This is why training on standard situations alone is insufficient: most critical failures occur in non-standard conditions that are rarely found in ordinary training samples.

To ensure safety, developers focus on studying scenarios where the probability of an error is highest. This requires the system's ability to recognize physical object limitations and predict the consequences of its movements before performing them. This approach turns autonomous machines into reliable assistants capable of acting cautiously even when a situation falls outside their primary experience.

Priority of Data Selection Quality Over Quantity

In the physical AI industry, a common rule of thumb holds that a thousand well-selected examples are far more valuable than a million random recordings. The process of selection, or data curation, becomes a key stage, as it removes noise from robotics datasets and focuses them on the most informative moments. A large amount of near-identical data only slows down training and may lead the model to ignore rare but important details.
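One simple form of curation is dropping recordings that are almost identical to ones already kept. The sketch below is a minimal greedy filter, assuming each episode has already been summarized as a numeric feature vector (for example, an embedding). The `features` values, the episode ids, and the `min_distance` threshold are hypothetical.

```python
import math

def drop_near_duplicates(episodes, min_distance):
    """Greedy filter: keep an episode only if it is far enough from every kept one."""
    kept = []
    for ep in episodes:
        if all(math.dist(ep["features"], k["features"]) >= min_distance for k in kept):
            kept.append(ep)
    return kept

episodes = [
    {"id": "pick_cup_001", "features": [0.10, 0.20]},
    {"id": "pick_cup_002", "features": [0.11, 0.21]},        # nearly identical to 001
    {"id": "pick_cup_wet_table", "features": [0.90, 0.40]},  # a genuinely different case
    {"id": "pick_cup_003", "features": [0.10, 0.19]},        # nearly identical to 001
]

curated = drop_near_duplicates(episodes, min_distance=0.05)
print([ep["id"] for ep in curated])  # ['pick_cup_001', 'pick_cup_wet_table']
```

The pairwise comparison is quadratic in the number of episodes, so a large collection would need an approximate nearest-neighbour index instead.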

Using high-quality multimodal datasets allows the system to find patterns between visual images and physical reactions faster. When developers focus on the accuracy of every labeled frame, they effectively create a reliable foundation for the machine's logical reasoning. This is critically important for scaling the technology, as properly structured data allows the system to adapt more efficiently to completely new environments without the need for full retraining.

The Role of Humans in Identifying and Labeling Complex Scenarios

Annotation experts play a decisive role in identifying events that might confuse an algorithm. They find specific visual traps in recordings that are obvious to a human but invisible to basic computer vision. It is human experience that allows the system to be taught to distinguish context and understand the complex properties of the environment.

Here are examples of critical cases that require special labeling in sensor data:

- Mirrored and glass surfaces. The robot may perceive a reflection as real space or fail to notice a transparent obstacle.

- Liquid on the floor. Spilled water radically changes the friction coefficient, requiring a completely different movement model to maintain balance.

- Variable lighting. Sharp shadows or direct sunlight can blind sensors and distort depth perception.

- Non-standard human behavior. Sudden movements or unusual gestures of those nearby must be correctly interpreted to avoid collisions.

Thanks to this meticulous work by specialists, the model gains knowledge of events that happen rarely but have the greatest impact on safety. This transforms a set of sensors into an intelligent system ready for the unpredictability of the real world.
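Once annotators have tagged such cases, the tags can be used to make rare scenarios appear more often during training. The sketch below shows one possible scheme, inverse-frequency weighting, in which every scenario tag receives the same total sampling probability. The tag names and counts are made up for illustration.

```python
import random
from collections import Counter

def sampling_weights(tags):
    """Weight each recording inversely to how common its scenario tag is."""
    counts = Counter(tags)
    raw = [1.0 / counts[tag] for tag in tags]
    total = sum(raw)
    return [w / total for w in raw]  # normalized so the weights sum to 1

# Hypothetical dataset: eight ordinary recordings and two rare edge cases.
tags = ["normal"] * 8 + ["glass_surface", "wet_floor"]
weights = sampling_weights(tags)

# Each of the three tags now gets one third of the total probability.
random.seed(0)
batch = random.choices(range(len(tags)), weights=weights, k=6)
print([tags[i] for i in batch])
```

Fully equalizing the tags is an aggressive choice. In practice the weighting is often softened so that ordinary cases still dominate.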

FAQ

How is the privacy issue resolved during real-world data collection?

To protect privacy, algorithms for automatic blurring of faces and confidential information are used directly during recording. Additionally, a significant portion of training is moved to isolated simulations where personal data is absent by definition.

Is there a single standard format for storing robotics datasets, similar to JPEG for photos?

There is no single standard yet. The industry is still moving toward standardization, but formats built on ROS (Robot Operating System) tooling, such as bag recordings, are becoming popular. Shared formats let different laboratories merge their data into giant libraries for training large models.

Does the hardware wear and tear of the robot itself affect the quality of collected data?

Yes, over time, backlash in mechanisms or motor wear can distort sensory data, confusing the model. Therefore, data collection systems must include regular self-calibration to distinguish changes in the environment from the degradation of their own "body".

What happens if the training data was collected only by a right-handed operator?

This leads to a "data shift" (a biased training distribution), where the robot is ineffective when working with its left hand or in mirrored conditions. To avoid this, datasets are artificially supplemented by mirroring recordings or by involving operators with different motor skills.
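Mirroring a recording means reflecting both the image and the action, otherwise the two no longer agree. The sketch below illustrates the idea under strong simplifying assumptions: the image is a list of pixel rows, the action is an end-effector displacement `[dx, dy, dz]` with `y` as the lateral axis, and the robot and workspace are symmetric about the x-z plane. Rotations and swapping left and right arm channels would need extra handling that is not shown here.

```python
def mirror_sample(image_rows, action_xyz):
    """Reflect one training sample left-to-right.

    image_rows: list of pixel rows (each row is a list of pixel values).
    action_xyz: end-effector displacement [dx, dy, dz], y being the lateral axis.
    """
    flipped = [list(reversed(row)) for row in image_rows]  # horizontal image flip
    dx, dy, dz = action_xyz
    return flipped, [dx, -dy, dz]  # only the lateral component changes sign

# Hypothetical 2x3 "image" and a small displacement to the robot's left.
image = [[1, 2, 3],
         [4, 5, 6]]
action = [0.05, 0.02, -0.01]

mirrored_image, mirrored_action = mirror_sample(image, action)
print(mirrored_image)   # [[3, 2, 1], [6, 5, 4]]
print(mirrored_action)  # [0.05, -0.02, -0.01]
```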

How does the energy consumption during the training of such models affect the environment?

Training large physical models requires massive computing power, prompting developers to switch to energy-efficient neural network architectures. Moving part of the training into simulation and then transferring the result to hardware (sim-to-real) also helps reduce the overall carbon footprint compared to endless real-hardware testing.

How does AI understand that the data in a dataset was erroneous or contained a failed action?

A filtering process is used where every attempt is evaluated by a success criterion. If, at the end of the recording, a glass was broken or the goal was not reached, such data is either discarded or labeled as a "negative example" from which the robot learns what not to do.
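The filtering step can be sketched as a function that applies a success criterion to every recording and sorts it into positive or negative examples. The criterion and the field names `goal_reached` and `object_damaged` are hypothetical. A real pipeline would derive them from sensors or human review.

```python
def is_success(episode):
    """Hypothetical success criterion: goal reached and nothing was damaged."""
    return episode["goal_reached"] and not episode["object_damaged"]

def split_by_outcome(episodes, keep_negatives=True):
    """Label successful attempts as positive; keep or discard the failures."""
    positives, negatives = [], []
    for ep in episodes:
        if is_success(ep):
            positives.append({**ep, "label": "positive"})
        elif keep_negatives:
            negatives.append({**ep, "label": "negative"})
    return positives, negatives

recordings = [
    {"id": "ep_001", "goal_reached": True, "object_damaged": False},
    {"id": "ep_002", "goal_reached": True, "object_damaged": True},    # glass broke
    {"id": "ep_003", "goal_reached": False, "object_damaged": False},  # goal missed
]

positives, negatives = split_by_outcome(recordings)
print([ep["id"] for ep in positives])  # ['ep_001']
print([ep["id"] for ep in negatives])  # ['ep_002', 'ep_003']
```

Setting `keep_negatives=False` corresponds to discarding failed attempts instead of learning from them.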