Ensuring label fairness and bias reduction in data labeling

Data labeling is a key component of machine learning, providing the algorithm with the training data necessary to properly develop insights. If labels are biased or unfair, they can create inaccurate models that encourage inaccurate conclusions. This can be damaging to both businesses and individuals.

Embracing fairness and reducing bias in data labeling is critical for organizations that leverage machine learning models; yet how do you ensure data labeling is conducted in an unbiased fashion? We will discuss 8 steps that must be followed to ensure fairness and bias reduction when labeling data sets.

In this article, we will explain why label fairness and bias reduction is so important – its effects on machine learning algorithms – as well as what must be done by an organization in order for their data labels to not only be accurate but fairly applied across the board. We’ll also provide tips from experts who’ve been through the process before on how to achieve this goal most efficiently with minimal time investment. Get ready to level up your knowledge of label fairness & elimination of biases!

Understand Your Dataset

When it comes to data labeling, the key is to ensure that data labels are fair and free from biases. To do this, you first need to explore the features in your dataset to identify potential sources of bias. You have to analyze how the different variables in your dataset are correlated and if there any discrepancies between them. This can help you uncover any issues that could cause biased labeling.

Another important step is to use the Responsible AI dashboard's data analysis capabilities for predict and prevent your outcomes. The dashboard's predictive models help in automating the process of label identification by capturing patterns related to the data itself, as well as external factors such as socio-demographic traits, location or language. This helps in reducing future bias by providing insights for better decision making around data labels.

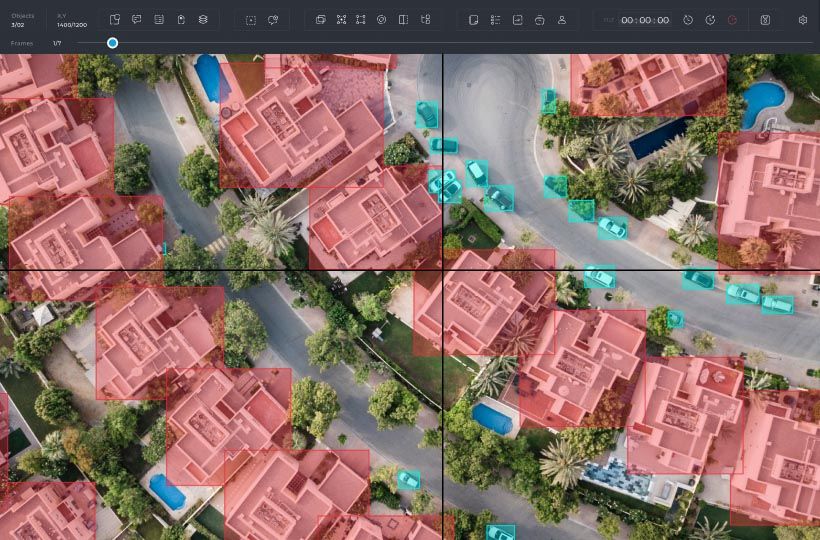

Finally, considering bias detection at scale is no cakewalk. Leverage third-party tools like Labelbox or Saggezza’s Expert Workbench can help detect and mitigate any biases that have crept into labeling datasets overtly or inadvertently. Tools such as these can provide a comprehensive view into where potential biases exist and then help you address them effectively through clustering and collaborative annotation processes.

To ensure label fairness, it is crucial to define your label requirements upfront based on data-driven performance metrics, user feedback, and other relevant criteria to guide all labeling teams in the same direction while minimizing bias throughout the process. Ultimately, this will make sure that all labels are consistent with each other and applicable across different regions, language groups etc., thereby ensuring unbiased labeling results for better decisions making attained from labeled data sets.

Define The Label Requirements

Data labeling is an important part of any data science project. It is the process of annotating certain properties and characteristics of data points which makes it possible to index and search that data with algorithms. Researchers have developed methods to ensure label fairness and bias mitigation, as well as algorithm fairness definitions. Despite advances towards reducing bias in clinical predictions using such methods, there are still gaps because of technical difficulties, high dimensional health data, lack of knowledge on underlying causal structures, and more.

It is important to define label requirements before beginning a data labeling project. Start by outlining the classes to be labeled and the labelers' responsibilities when labeling each dataset. Determine which tasks require manual labeling or automated annotation (and the number of each type for accuracy) as well as how you will measure performance and accuracy (for example, in terms of precision-recall scores). Once you have completed this process for each of your datasets, document your entire policy and procedures in detail so you can ensure uniformity across datasets from different sources over time.

From ensuring label fairness to reducing bias in data labeling projects, it's essential to fully understand the requirements for the task at hand before starting it up. Furthermore, documenting your policies and procedures will help maintain consistency while protecting against any future conflicts or legal issues that could arise from unintentional biases throughout the process.

Document Your Policies And Procedures

The Joint Commission recently released updated guidelines, applicable to Cytogenetics and NBS data collection, in order to ensure a fair and bias-free labeling process. This information was created to replace existing guidelines and offer additional testing guidance and conditions when collecting the data. The Joint Commission also creates laboratory testing documents for stakeholders as well as guidance documents, as this is an important part of their mission: to ensure that there is fairness and equity across the labeling and data collection process.

To ensure that your organization upholds ethical standards, it's important that you document policies and procedures when it comes to collecting data. This means that any discrepancies or changes should be communicated properly with stakeholders, especially if they are related to the fairness of how labels are applied. Moreover, these documents should be up-to-date with policy changes and regulations set forth by the Joint Commission.

It's paramount for organizations to strive for equal representation throughout their data collection processes — this means eliminating any existing unfair representations in both program designs and materials used with public audiences. It also means establishing protocols for handling situations if biased labels occur. As policy makers continue to work towards achieving more equitable representation across different demographics, organizations should also strive for more transparency around their data labeling processes so everyone can benefit from a fair system.

Eliminate Existing Unfair Representations

In any AI or ML-based application, it is important to develop an understanding of how biases might enter the system. To ensure label fairness and reduce bias, it is essential to approach data labeling in a way that balances both advantaged and disadvantaged groups in an effort to accurately represent them. The action taken should also strive to remove bias while preserving all task-relevant information.

In order to achieve this goal, it is important to undertake a more thoughtful review of the source of your data and the associated labels provided by human annotators. All stakeholders should proactively work together to reduce the impact of bias and prevent incorrect or imprecise labeling from occurring. Additionally, one common type of bias found in data analysis is propagating the current state; labeling works as an underpinning perspective in identifying and categorizing properties and characteristics about a data point for algorithms to search for. Lastly, when dealing with labels, it's important to remember that anti-bias education must incorporated as part of the overall strategy within machine learning frameworks for effective results.

Producing high-quality labels requires careful consideration when determining which methods to use. While manual labeling provides maximum accuracy and control over quality, automation can save time and money while improving accuracy with ongoing usage but must be monitored closely so that mistakes don't occur. To minimize any existing unintended representations in labels, decide on the best labeling method depending on your aims and resources available - manual or automated - prior implementation into an ML model.

Determine Your Labeling Methods

When it comes to data labeling, it is important to identify your available resources and prioritize a plan. This means assessing the right method of subjectivity when it comes to defining the categories for which labels should be assigned. Drawing on wisdom from traditional methods can help with establishing your expectations and approach. It is also essential to find the right mix of automation and people, based on the needs and available resources. Consider the balance between automated labeling systems that can cover high volumes quickly, versus manual labeling, which may have greater accuracy but come at a higher cost.

You should also consider using internal subject matter experts who may have an understanding of your dataset that external labelers won't have access to. While exploring standards in place in this domain, take into account both the internal standards you want to establish as well as any external ones you want to abide by such as GDPR or CCPA requirements. Lastly, use measures of agreement amongst multiple labelers/ Annotators to determine label accuracy and consistency, ultimately reducing bias overall.

Data Labeling can be a daunting task for many organizations but there are methods available that can make it easier if implemented properly. Being aware of labeler biases and potential effects on data labeling outcomes can help you ensure label fairness while crafting your label strategy moving forward.

Be Aware Of Labelers Biases

When dealing with data labelling, it is important to be aware of the fact that the human annotators can exhibit bias in their work. Annotations are typically supposed to be consistent across a large set of examples within a given sense and when they do, they become more valuable. However, it is quite common for human labelers to try and agree with the majority consensus or go with what sounds right in order to not "rock the boat". Therefore, it is necessary to take substantial caution when dealing with data labelling and pay attention to specific cases which could be subject to bias or bias mislabelling.

To ensure label accuracy, there are certain measures one can take such as introducing data distributional sampling principles and checks for high quality standard. Furthermore, training human labelers on how to accurately provide inherently biased labels can also help immensely in decreasing the likelihood of mislabels. Finally, using tools such as metrics for evaluation of annotation accuracy could also prove to be useful for identifying cases where labeled examples might have errors or inconsistencies caused by bias.

The importance of ensuring proper quality control during data labelling cannot be overlooked. Being mindful of potential biases while monitoring labels will contribute significantly towards providing fair labels while reducing any potential inaccuracies in labelling work. Therefore, it is important to always check the data annotation quality before attempting a labelling task.

Check Data Annotation Quality

Data labeling is an important step in the data-generating process, as it identifies data points and assigns them appropriate labels. Unfortunately, it can be easy to introduce bias into labeled data due to label bias or annotator bias. Label bias occurs when the data-generating process selectively assigns labels differently for various groups. Annotator bias occurs when the labels provided by different humans vary significantly. To avoid this, machines need to be used in combination with human experts and non-experts. When done correctly, this will ensure that difficult cases are handled by humans, as well as guarantee that the labels assigned to the data points are accurate and unbiased.

In order to ensure that your labeled data is fair and without bias, it is important to audit any labels assigned by machines or humans and revise them if needed. This can be done using a combination of machine learning models as well as expert feedback to identify any possible discrepancies in the labels. By doing this, you can ensure that your labeled data provides useful insights for further analysis of patterns and trends in your dataset.

Auditing and revising labels is essential for reducing label bias and guaranteeing label fairness. By combining AI advances with input from experts and non-experts, companies can label their data at scale while minimising the possibility of introducing unwanted biases into their datasets. With careful inspection and proper management of labeled data sources, organizations can trust that their information is accurate and free from bias.

Audit And Revise Labels

Label bias is a widespread issue in the development of data labels and machines models. It is acknowledged as the primary source inducing discrimination from gender, race and nationality to religion. To minimize it, Fairness Flow focuses on aiding machine learning engineers spot certain potential statistical biases that appear in certain types of AI models and labels. The aim of this tool is to detect if there are any unconfounded selections or outcomes given specific observed labels and data features. Bias occurs when a model systematically over or under estimates the impact for multiple groups.

We must take into consideration both the economic and normative aspects when dealing with label bias. Thus, Fairness Flow offers an effective solution towards addressing the empirically-relevant challenge of selectively labeled data while helping machine learning engineers detect certain forms of statistical bias in AI models and labels. Auditing and revising the label system is essential in order to mitigate data labeling issues effectively.

By utilizing tools like Fairness Flow, organizations have access to powerful insights that can help improve data quality. Through label revision processes supported by such tools, organizations are able to identify potential discriminatory factors in their datasets, reduce bias and create systems that align with their ethical principles.