Managing Large Annotation Projects in the Cloud: Tools and Techniques

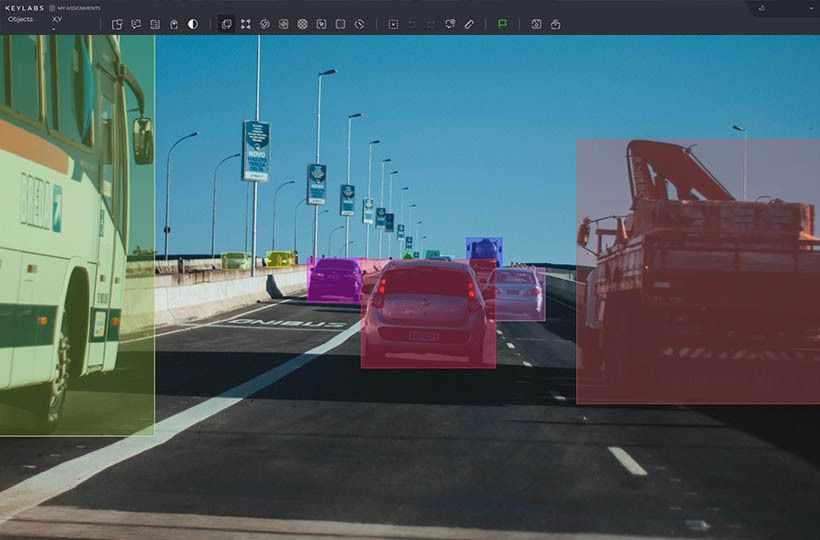

Managing large annotation projects in the cloud has become essential to modern data workflows, especially in machine learning, natural language processing, and computer vision. As datasets grow in size and complexity, the challenges associated with organizing, distributing, and maintaining annotation tasks increase dramatically. Traditional manual approaches quickly become inefficient, leading to bottlenecks in project timelines and inconsistent data quality. Moving to cloud-based solutions provides a scalable infrastructure for handling massive amounts of data, allowing teams to collaborate relatively easily across regions and time zones.

The cloud offers numerous advantages for managing large-scale annotations, but choosing the right tools and frameworks requires careful planning. Key considerations include platform compatibility, integration with existing workflows, data security, tracking progress, and maintaining quality control. Cloud-based tools often have APIs, automation features, and dashboards that simplify project management and reduce overhead.

Key Takeaways

- Cloud-based dataset handling enables efficient management of large annotation projects.

- Precise annotations significantly impact AI model performance.

- A hybrid model of vetted freelancers and in-house teams ensures quality and efficiency.

- Cloud annotation allows for dynamic scaling of projects.

- Meticulous planning and quality checks are essential for large annotation projects.

- Cloud-based tools offer flexible storage and collaboration options.

- GDPR-compliant workflows are crucial for handling sensitive data securely.

Overview of Cloud-Based Dataset Handling

Processing a dataset in the cloud starts with understanding how the cloud changes the way data is stored, accessed, and processed. Because storage and compute are provisioned on demand, teams can process datasets of any size, from a few gigabytes to petabytes, with much greater flexibility and reliability. The cloud also supports redundancy, automatic backups, and global availability, which helps keep data accessible and recoverable regardless of the team's physical location.

Working with datasets in the cloud involves more than just uploading files. It requires structured organization, access control, and integration with processing tools. Access policies control who can read, write, or modify the data, providing detailed permissions for different project roles. Cloud-based datasets also often connect directly to services for annotation, machine learning model training, or analytics, reducing the friction between data storage and data use.
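
As a rough illustration of role-based permissions, the sketch below maps project roles to read, write, and admin rights and checks a request against them. The role names and the `is_allowed` helper are hypothetical; real cloud platforms express the same idea through their own policy languages.

```python
from enum import Flag, auto


class Permission(Flag):
    READ = auto()
    WRITE = auto()
    ADMIN = auto()  # e.g. changing who may access the dataset


# Hypothetical project roles; a real project would define its own.
ROLE_PERMISSIONS = {
    "viewer": Permission.READ,
    "annotator": Permission.READ | Permission.WRITE,
    "project_admin": Permission.READ | Permission.WRITE | Permission.ADMIN,
}


def is_allowed(role: str, needed: Permission) -> bool:
    """Return True only if the role holds every permission in `needed`."""
    granted = ROLE_PERMISSIONS.get(role, Permission(0))  # unknown roles get nothing
    return (granted & needed) == needed


print(is_allowed("annotator", Permission.WRITE))
print(is_allowed("viewer", Permission.WRITE))
```

Defaulting unknown roles to no permissions keeps the check closed by default, so a misspelled or unregistered role cannot read or write anything.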

Cloud-based dataset processing supports versioning, scalability, and automation - features essential for managing complex and constantly evolving datasets. Versioning ensures that previous iterations of data are preserved, which is critical for reproducibility in research and development. Scalability allows teams to increase computing power or storage on demand without disrupting workflows or requiring new infrastructure. Automation tools like cloud functions or pipelines can pre-process data, validate formats, or run subsequent jobs as new data arrives.
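
One simple way to picture dataset versioning is a manifest of content hashes: each version records a digest per file, and comparing two manifests shows what was added, removed, or changed. The sketch below is a minimal in-memory illustration with made-up file names, not the mechanism any particular cloud service uses.

```python
import hashlib


def build_manifest(files: dict[str, bytes]) -> dict[str, str]:
    """Map each file name to the SHA-256 hex digest of its content."""
    return {name: hashlib.sha256(data).hexdigest() for name, data in files.items()}


def diff_manifests(old: dict[str, str], new: dict[str, str]) -> dict[str, list[str]]:
    """List files added, removed, or changed between two dataset versions."""
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(n for n in old.keys() & new.keys() if old[n] != new[n]),
    }


# Hypothetical annotation files for two versions of a dataset.
v1 = build_manifest({
    "img_001.json": b'{"label": "cat"}',
    "img_002.json": b'{"label": "dog"}',
})
v2 = build_manifest({
    "img_001.json": b'{"label": "cat"}',
    "img_002.json": b'{"label": "fox"}',
    "img_003.json": b'{"label": "owl"}',
})
print(diff_manifests(v1, v2))
```

An automated pipeline could run a comparison like this whenever new data arrives and trigger validation or re-annotation only for the files that changed.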

Key Features and Benefits

- Scalability. Cloud platforms can handle datasets of virtually any size, allowing teams to scale storage and computing resources to meet project needs. This elasticity is crucial for managing changing workloads or growing datasets without investing in new physical infrastructure.

- Global availability. Teams working in different locations can access the same datasets in real time. Cloud-based environments remove many of the limitations of on-premises servers, enabling collaboration regardless of geography or time zone.

- Automation and integration. Many cloud tools support automation through APIs, workflows, and cloud functions. This allows you to optimize and integrate tasks such as data ingestion, pre-processing, and annotation with machine learning pipelines or project management systems.

- Version control and data provenance. Built-in version control features allow users to track data changes, revert to previous states, and keep detailed records of how data has changed. This is vital for reproducibility, auditing, and compliance, especially in regulated industries.

- Real-time collaboration and monitoring. Dashboards and analytics tools allow you to track progress, annotator performance, and dataset quality in real time. Project managers can quickly identify bottlenecks, flag inconsistencies, and make adjustments on the fly.

Data Security and Privacy in the Cloud

Cloud providers implement robust security systems that include encryption at rest and in transit, multi-factor authentication, and intrusion detection systems. These built-in protections greatly reduce the risk that data is intercepted, tampered with, or accessed without authorization. However, even though the cloud infrastructure is generally secure, users must still carefully configure access controls and permissions to avoid accidental exposure. Responsibility is shared between the provider and the user, a concept known as the "shared responsibility model."

One of the key strategies to ensure privacy in cloud projects is to implement strict role-based access control (RBAC). This system limits user access to only the data and tools needed for their specific role, reducing the risk of internal and external data breaches. Additionally, many platforms support audit logs that track user activity and access history, helping teams identify unusual behavior or unauthorized activities. Data anonymization or pseudonymization techniques can also be applied before uploading datasets to the cloud, especially in projects involving personal or sensitive information. These measures are especially important in areas governed by regulations such as GDPR, HIPAA, or CCPA.
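
Pseudonymization before upload can be sketched with a keyed hash: each sensitive value is replaced by an HMAC-SHA256 digest, so the same input always maps to the same token without exposing the original. The field names, the record layout, and the way the key is supplied are assumptions for illustration; in practice the key would come from a secrets manager and stay outside the uploaded dataset.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace a value with a keyed SHA-256 digest (stable for a given key)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def pseudonymize_record(record: dict, sensitive_fields: set, secret_key: bytes) -> dict:
    """Return a copy of the record with the sensitive fields replaced by tokens."""
    return {
        field: pseudonymize(str(value), secret_key) if field in sensitive_fields else value
        for field, value in record.items()
    }


# Hypothetical key and record, for illustration only.
key = b"replace-with-a-key-from-your-secrets-manager"
record = {"patient_name": "Jane Doe", "email": "jane@example.com", "scan_id": "scan-0042"}
safe = pseudonymize_record(record, {"patient_name", "email"}, key)
print(safe["scan_id"], safe["email"][:12])
```

Note that this is pseudonymization rather than anonymization: anyone who holds the key can recompute the token for a known value, so the key needs the same protection as the original data.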

Cloud platforms provide compliance tools and certifications to support secure and legal data processing across industries and jurisdictions. Organizations can use a compliance-ready infrastructure that meets international standards, reducing the complexity of legal and regulatory obligations. Customers can often manage their own encryption keys, adding another layer of control over sensitive data. Additionally, many providers offer isolated environments or virtual private clouds (VPCs) for projects that require higher security or data residency guarantees.

Best Practices for Data Protection

One of the most important practices is enforcing encryption in transit and at rest to protect data from unauthorized access during transfer and storage. Access should be tightly controlled using principles like least privilege, where users are given only the minimum access necessary for their role. Multi-factor authentication (MFA) should also be enabled to reduce the risk of compromised credentials.

Another crucial best practice is implementing comprehensive access logging and auditing. Every action - uploads, downloads, modifications, and permissions changes - should be tracked and reviewed regularly to detect unusual patterns or unauthorized behavior. Automated alerts can be configured to notify administrators of suspicious activity, such as access from unexpected locations or rapid data extraction. Logs help during security incidents and are valuable for compliance with regulations.
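
To make the idea of an automated alert for rapid data extraction concrete, the sketch below scans access-log events and flags any user whose downloads exceed a threshold within a sliding time window. The `AccessEvent` fields and the threshold values are hypothetical; a real deployment would read from the platform's audit log and tune the limits to normal annotator behavior.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessEvent:
    user: str
    action: str       # e.g. "download", "upload", "modify"
    timestamp: float  # seconds since some reference point


def find_rapid_downloaders(events, max_downloads=100, window_seconds=60.0):
    """Return users with more than `max_downloads` downloads inside any window."""
    recent = defaultdict(deque)  # per-user timestamps still inside the window
    flagged = set()
    for event in sorted(events, key=lambda e: e.timestamp):
        if event.action != "download":
            continue
        window = recent[event.user]
        window.append(event.timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while window and event.timestamp - window[0] > window_seconds:
            window.popleft()
        if len(window) > max_downloads:
            flagged.add(event.user)
    return flagged


# Made-up log: "a" downloads quickly, "b" slowly, "c" only uploads.
log = (
    [AccessEvent("a", "download", float(t)) for t in range(5)]
    + [AccessEvent("b", "download", float(t * 100)) for t in range(5)]
    + [AccessEvent("c", "upload", float(t)) for t in range(10)]
)
print(find_rapid_downloaders(log, max_downloads=3, window_seconds=60.0))
```

The flagged set could then feed whatever notification channel the team already uses; the detection logic stays independent of how the alert is delivered.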

Challenges in Cloud-Based Dataset Handling

Without clear strategies and the right tools, cloud-based dataset handling can run into issues that slow down workflows, increase costs, and compromise data integrity. Addressing them early is essential for smooth operations, especially in data-intensive projects like large-scale annotation, machine learning model development, or compliance-heavy analytics. Below are some of the most common challenges in cloud-based dataset handling:

- Data Fragmentation and Inconsistency. When data is spread across multiple cloud services, buckets, or storage regions, it becomes challenging to maintain a single source of truth. This fragmentation can lead to inconsistent versions, duplicates, or outdated records that affect downstream processing and model performance.

- Performance Bottlenecks and Latency. Accessing large datasets across regions or through poorly optimized pipelines can introduce latency, slowing down data processing and annotation tasks. This is especially problematic in real-time or high-throughput environments where speed is crucial to productivity.

- Cost Management. Cloud resources operate on a pay-as-you-go model, but storage, computing, and data transfer fees can escalate quickly without careful monitoring. Hidden costs like egress charges or idle virtual machines can add up, making budgeting and resource optimization an ongoing challenge.
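
The cost point above can be made concrete with a small estimate that breaks a monthly bill into storage, egress, and active versus idle compute. All prices below are placeholders chosen for easy arithmetic, not any provider's actual rates, and the class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MonthlyUsage:
    storage_gb: float
    egress_gb: float
    vm_hours_active: float
    vm_hours_idle: float  # machines left running with no work


@dataclass
class PriceSheet:
    storage_per_gb_month: float
    egress_per_gb: float
    vm_per_hour: float


def estimate_monthly_cost(usage: MonthlyUsage, prices: PriceSheet) -> dict:
    """Break the estimated bill into components so hidden costs are visible."""
    breakdown = {
        "storage": usage.storage_gb * prices.storage_per_gb_month,
        "egress": usage.egress_gb * prices.egress_per_gb,
        "compute_active": usage.vm_hours_active * prices.vm_per_hour,
        "compute_idle": usage.vm_hours_idle * prices.vm_per_hour,
    }
    breakdown["total"] = sum(breakdown.values())
    return breakdown


# Placeholder prices and usage figures, for illustration only.
prices = PriceSheet(storage_per_gb_month=0.02, egress_per_gb=0.10, vm_per_hour=0.50)
usage = MonthlyUsage(storage_gb=1000, egress_gb=200, vm_hours_active=300, vm_hours_idle=100)
for item, cost in estimate_monthly_cost(usage, prices).items():
    print(f"{item}: {cost:.2f}")
```

Keeping idle compute and egress as separate line items makes it easier to see when they, rather than storage, are driving the bill.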

Summary

Managing large annotation projects in the cloud requires more than simply moving data to a remote server; it involves orchestrating a dynamic ecosystem of tools, people, and workflows. Success depends on choosing the proper infrastructure, fostering secure collaboration, and continuously adapting to evolving data demands. As teams work with increasingly complex and diverse datasets, the cloud offers the flexibility and power to meet those challenges, but only when paired with thoughtful planning and execution. With the right balance of automation, human oversight, and best practices, cloud-based annotation can become scalable, reliable, and efficient.

FAQ

What are the key advantages of using cloud storage for annotation projects?

Cloud storage offers scalability, allowing easy adjustments to project size. It also provides flexibility to handle varying workloads.

What are the best practices for ensuring data security in cloud-based annotation projects?

To ensure data security, implement robust encryption techniques and establish strict access control measures. Use data isolation strategies to protect sensitive information.

What are the cost considerations for implementing cloud-based annotation solutions?

Cost considerations include cloud storage fees, data transfer costs, computational resources for processing, and licensing fees for annotation tools.

How do cloud-based tools improve collaboration in annotation projects?

Cloud platforms allow multiple users to work on the same dataset in real time, with shared dashboards and role-based permissions that streamline communication and task management.

Do cloud annotation tools integrate with machine learning workflows?

Many tools provide APIs and workflow automation features that connect directly with model training environments, supporting continuous data labeling and model improvement.

What challenges arise when managing annotator performance in the cloud?

Tracking quality and consistency can be difficult at scale, but built-in analytics, review systems, and consensus mechanisms help maintain standards across large teams.
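
A consensus mechanism can be as simple as a majority vote across annotators. The sketch below is one possible version, with a hypothetical `min_agreement` threshold: it accepts a label only when more than that share of annotators agree and otherwise returns `None` so the item can be routed to review.

```python
from collections import Counter


def consensus_label(labels, min_agreement=0.5):
    """Return the majority label, or None if agreement is not above the threshold."""
    if not labels:
        return None
    label, count = Counter(labels).most_common(1)[0]
    return label if count / len(labels) > min_agreement else None


print(consensus_label(["cat", "cat", "dog"]))
print(consensus_label(["cat", "dog"]))
```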

How is regulatory compliance maintained across different regions in the cloud?

Choosing providers with region-specific storage options and certified infrastructure helps meet local legal requirements, while privacy management tools support user rights and consent tracking.