Temporal Consistency in Video Annotation

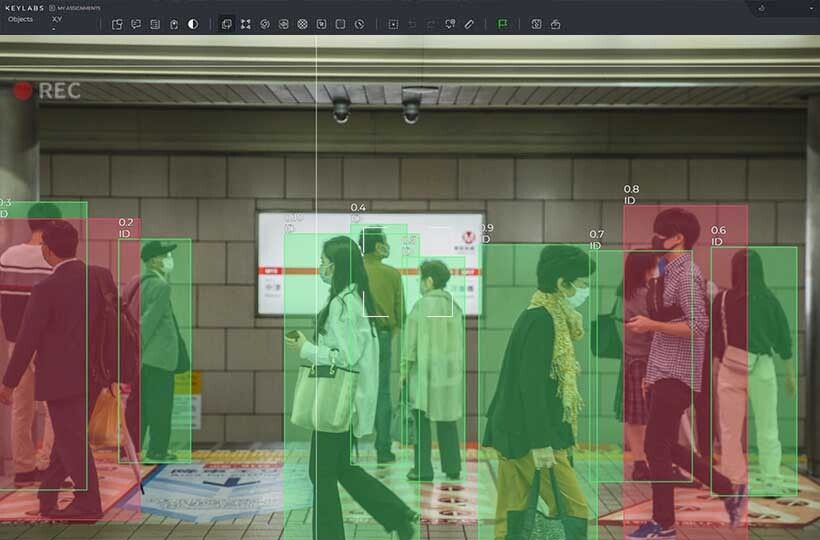

Modern computer vision models are trained to recognize not only the shape of objects but also their trajectories, acceleration, and patterns of interaction with the environment. For such algorithms to function correctly, each frame must be logically connected to the previous one, creating a cohesive story of movements. Even minor deviations in the position of an object's bounding box between adjacent frames are perceived by the model as chaotic jumps. This prevents the system from understanding the true speed and direction of movement.

If an object does not have a persistent identifier throughout the entire video, the neural network is unable to track its path. Unstable labeling forces algorithms to generate erratic predictions, leading to false positives and unstable device performance in real time. Therefore, significant attention is paid to the smoothness of transitions between frames: it turns a set of static images into a continuous motion record from which the model can learn to predict future events based on current motion.

Quick Take

- High-quality annotation requires stable frames, persistent object IDs, and consistency in their classes.

- Using interpolation algorithms to connect keyframes reduces human error and speeds up the work several times over.

- For objective assessment, MOTA (tracking accuracy) and MOTP (positioning precision) metrics are used.

- The process includes marking reference points, automatic trajectory building, and multi-level validation for complex scenarios.

Basics of Stability in Video

Working with video requires a special approach because artificial intelligence perceives the world not through individual images, but through a continuous stream of events. If the data in this sequence contradicts itself, the system loses orientation and makes errors in calculations. Understanding how to ensure the stability of each element is the first step toward creating reliable algorithms capable of predicting the future.

The Concept of Temporal Coherence in Labeling

In the world of video, quality work begins when each frame logically continues the previous one. Temporal coherence means that all labeled objects move smoothly and maintain their properties throughout the entire clip. If we watch a labeled video, we should not see sharp jumps or changes that contradict the laws of physics.

To achieve high video quality during annotation, specialists monitor the following parameters:

- Stability of bounding boxes. Boxes around objects should fit them tightly and not change their size without a visible reason.

- Persistence of object IDs. Each car or pedestrian receives its own number that does not change from the beginning to the end of the video.

- Consistency of classes. An object cannot suddenly turn from a truck into a bus in the middle of a trip.

- Smoothness of segmentation. Colored object masks must change their shape uniformly in accordance with pixel movement.
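Two of the parameters above, class consistency and ID persistence, lend themselves to a simple automated check. The following is a minimal sketch, assuming annotations are available as dictionaries with the hypothetical keys `frame`, `track_id`, and `label`; real export formats will differ. Note that a gap in frames is only a hint: the object may have legitimately left the scene.

```python
from collections import defaultdict


def find_consistency_issues(annotations):
    """Report tracks whose class changes or whose frames have gaps.

    `annotations` is an iterable of dicts with the (hypothetical) keys
    'frame' (int), 'track_id' (int) and 'label' (str).
    """
    labels_by_track = defaultdict(set)
    frames_by_track = defaultdict(list)
    for ann in annotations:
        labels_by_track[ann["track_id"]].add(ann["label"])
        frames_by_track[ann["track_id"]].append(ann["frame"])

    issues = []
    # A track that carries more than one label changed class mid-clip.
    for track_id, labels in labels_by_track.items():
        if len(labels) > 1:
            issues.append((track_id, "class_change", sorted(labels)))
    # Consecutive annotated frames more than one step apart form a gap.
    for track_id, frames in frames_by_track.items():
        frames = sorted(frames)
        gaps = [(a, b) for a, b in zip(frames, frames[1:]) if b - a > 1]
        if gaps:
            issues.append((track_id, "missing_frames", gaps))
    return issues
```

Flagged tracks are candidates for manual review rather than confirmed errors.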

Where Difficulties Arise During Work

The sequence annotation process often encounters problems that prevent the model from learning correctly. The most important aspect here is frame-to-frame consistency, as any break in logic is perceived by artificial intelligence as an error. Most difficulties arise due to the complexity of the video itself or the human factor during manual verification of each frame.

Such breaks in consistency make the data unsuitable for training complex systems because they teach the neural network to react to non-existent movements and changes.

Metrics for Assessing Temporal Stability

To judge how well a video has been annotated, teams rely on quantitative indicators that show how stable the bounding boxes are and whether objects are lost during movement. These metrics turn a subjective impression into concrete quality figures required by clients of complex artificial intelligence systems.

Identification Error Indicators and Frame Changes

The most important indicator in video is the system's ability to continuously monitor each object. If an object's number suddenly changes, it is considered a serious defect, measured via the ID switch rate. This metric shows how often identifiers "jump" between different targets, allowing for an assessment of the reliability of trajectory tracking from the beginning to the end of the clip.

Developers also use the temporal IoU (Intersection over Union) indicator, which measures how much an object's bounding boxes on adjacent frames overlap. If an object moves naturally, this overlap should change smoothly without sharp fluctuations. Measuring frame-to-frame variance helps find exactly those moments where the bounding box vibrates too much, indicating low work quality or a technical failure in the interpolation system.
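The temporal IoU check can be sketched in a few lines. This is an illustration under assumptions: boxes are `(x1, y1, x2, y2)` tuples, one per consecutive frame of a single track, and the `min_iou` threshold of 0.5 is an arbitrary placeholder that would need tuning per project.

```python
from statistics import pvariance


def iou(box_a, box_b):
    """Intersection over Union of two (x1, y1, x2, y2) boxes."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def temporal_iou(track):
    """IoU between each pair of adjacent boxes in one track."""
    return [iou(a, b) for a, b in zip(track, track[1:])]


def flag_jitter(track, min_iou=0.5):
    """Indices i where the overlap between frame i and i + 1 is too low."""
    return [i for i, value in enumerate(temporal_iou(track)) if value < min_iou]


def overlap_variance(track):
    """Population variance of adjacent-frame IoU; higher means shakier boxes."""
    values = temporal_iou(track)
    return pvariance(values) if values else 0.0
```

A smooth track yields IoU values that stay close to each other, so both the flag list and the variance stay small.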

Comprehensive Object Tracking Metrics

In large projects, entire systems of indicators are used for quality assessment, combining recognition accuracy and smoothness of motion. The most famous among them are the MOTA and MOTP metrics, which provide a full picture of how the tracking system works on large datasets. They allow for seeing the overall percentage of errors, including missed objects and false positives.

- MOTA (Multiple Object Tracking Accuracy) – overall accuracy that accounts for all cases where the system missed an object, reported a false positive, or made an identification error.

- MOTP (Multiple Object Tracking Precision) – positioning precision that shows how accurately the bounding box matches the real boundaries of the object in space.

- Number of Trajectory Breaks – an indicator of how many times a continuous line of object movement was interrupted due to technical errors.

- Average ID Persistence Time – the duration the system is able to track an object without a single error in its number.

Using such metrics makes the data verification process objective and allows teams to clearly see exactly where algorithms or annotator work needs improvement to achieve an ideal result.
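As a rough illustration, the two headline metrics reduce to simple arithmetic once the error counts are known. The sketch below assumes the matching between predictions and ground truth has already been done elsewhere. MOTA follows the standard form, one minus the ratio of misses, false positives, and ID switches to the number of ground-truth objects. MOTP is computed here as the mean IoU of matched pairs, which is one common convention; other definitions use average distance instead. Function and parameter names are hypothetical.

```python
def mota(false_negatives, false_positives, id_switches, num_ground_truth):
    """MOTA = 1 - (FN + FP + IDSW) / GT, with counts summed over all frames."""
    if num_ground_truth <= 0:
        raise ValueError("num_ground_truth must be positive")
    errors = false_negatives + false_positives + id_switches
    return 1.0 - errors / num_ground_truth


def motp(matched_ious):
    """Mean overlap of matched prediction / ground-truth pairs (assumed IoU)."""
    if not matched_ious:
        raise ValueError("at least one matched pair is required")
    return sum(matched_ious) / len(matched_ious)
```

Because the error count can exceed the number of ground-truth objects, MOTA can be negative for a very poor tracker.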

Interpolation Technology

Interpolation is the mathematical technique that spares annotators from labeling thousands of repetitive frames. Instead of drawing a box on every frame, the annotator creates only reference points, and the program takes over the calculation of the trajectory. This not only speeds up the work but also produces a smoothness of motion that is very hard to achieve manually.

How Linear and Non-Linear Interpolation Works

The process is based on the use of keyframes, where the annotator fixes the exact position of the object. If a car moves on a straight road at a constant speed, the program uses linear interpolation to move the bounding box uniformly between two points. This guarantees frame-to-frame consistency within the segment, as the box moves along a mathematically straight line without any jitter.
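Linear interpolation between two keyframes can be sketched as follows. This is a minimal example, assuming boxes are `(x1, y1, x2, y2)` tuples and that each coordinate is interpolated independently; the function names are illustrative, not taken from any particular annotation tool.

```python
def lerp_box(box_start, box_end, t):
    """Blend two boxes coordinate by coordinate; t runs from 0.0 to 1.0."""
    return tuple(a + (b - a) * t for a, b in zip(box_start, box_end))


def interpolate_linear(frame_start, box_start, frame_end, box_end):
    """Return {frame: box} for every frame between two keyframes, inclusive."""
    if frame_end <= frame_start:
        raise ValueError("frame_end must be greater than frame_start")
    span = frame_end - frame_start
    return {
        frame: lerp_box(box_start, box_end, (frame - frame_start) / span)
        for frame in range(frame_start, frame_end + 1)
    }
```

For example, `interpolate_linear(0, (0, 0, 10, 10), 4, (40, 0, 50, 10))` fills frames 0 through 4, shifting the box by the same amount on each step.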

However, in more complex situations, such as during turns or sharp braking, non-linear interpolation is applied. It accounts for acceleration and changes in tilt angle, creating a curved movement trajectory. This approach allows for maintaining high video quality even in dynamic scenes where the object constantly changes its pace or direction.
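The text does not name a specific non-linear method, so the sketch below uses a uniform Catmull-Rom spline purely as one possible illustration. It assumes keyframes are evenly spaced in time and boxes are `(x1, y1, x2, y2)` tuples. The curve passes through every keyframe and bends according to the neighboring keyframes, which approximates acceleration and turning.

```python
def catmull_rom(p0, p1, p2, p3, t):
    """Uniform Catmull-Rom value between p1 (t=0) and p2 (t=1)."""
    t2, t3 = t * t, t * t * t
    return 0.5 * (
        2 * p1
        + (-p0 + p2) * t
        + (2 * p0 - 5 * p1 + 4 * p2 - p3) * t2
        + (-p0 + 3 * p1 - 3 * p2 + p3) * t3
    )


def interpolate_spline(keyboxes, segment, t):
    """Box at fraction t of the segment keyboxes[segment] -> keyboxes[segment + 1].

    Assumes keyframes are evenly spaced; at either end of the list the
    missing neighbor is replaced by the endpoint itself.
    """
    last = len(keyboxes) - 1
    if not 0 <= segment < last:
        raise IndexError("segment out of range")
    b0 = keyboxes[max(segment - 1, 0)]
    b1 = keyboxes[segment]
    b2 = keyboxes[segment + 1]
    b3 = keyboxes[min(segment + 2, last)]
    return tuple(
        catmull_rom(c0, c1, c2, c3, t) for c0, c1, c2, c3 in zip(b0, b1, b2, b3)
    )
```

When the keyframes lie on a straight line at equal spacing, the result coincides with linear interpolation, so the spline only changes the path where the motion actually curves.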

The Role of Algorithms in Contour Prediction

Modern interpolation is becoming even smarter through the use of computer vision. Advanced sequence annotation tools don't just move a bounding box in a straight line; they analyze the movement of pixels around the object. This helps automatically adjust the size and shape of the annotation if the object approaches the camera or turns to a different side.

Thanks to the use of smart interpolation, the following benefits are achieved:

- Time Savings. Dataset development happens several times faster than with manual processing of every frame.

- Mathematical Precision. Absence of micro-vibrations in frames that usually occur due to human hand fatigue.

- Identity Preservation. The object is guaranteed to remain with the same ID throughout the entire interpolation segment.

- Ease of Correction. If the object's path changes, it is enough to move one key point to automatically update the trajectory across dozens of frames.

Interpolation turns routine work into an intellectual process of data flow management, where the human acts as the architect of trajectories, and the machine ensures the technical perfection of every frame.

Stages of Creating Stable Video Labeling

The first step in the work is the primary labeling of keyframes. The annotator selects only the most important moments of the object's movement, such as the start and end of a maneuver or a change in direction. After this, the interpolation process is launched, where special software automatically connects these points, drawing a smooth path for the object on all intermediate frames. This provides initial temporal coherence without the need to manually process every fraction of a second of video.

Quality Control and Final Validation

Once the automatic trajectory is ready, the stage of checking temporal consistency begins. Specialists review the video at high speed to notice any deviations, jitter, or "drift" of bounding boxes that the algorithm might have missed. At this stage, the errors found are corrected to bring the labeling into line with annotation guidelines and project requirements.

To confirm high video quality, the process concludes with the following steps:

- Metric Validation. Checking with automated tools for ID breaks, sharp coordinate jumps, or object class errors.

- Audit of Complex Cases. A separate review of scenes with bad weather, night lighting, or occlusions, where the probability of error is highest.

- Final Approval. Preparation of an accuracy report, including MOTA and MOTP indicators to confirm the dataset's readiness for training.
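The automated part of metric validation, checking for sharp coordinate jumps, might look like the sketch below. It assumes one track stored as a `{frame: (x1, y1, x2, y2)}` dictionary. The thresholds `max_shift_ratio` and `max_area_ratio` are arbitrary placeholders, not values from any standard; they would need tuning to the frame rate and the scene.

```python
import math


def find_jumps(track, max_shift_ratio=0.5, max_area_ratio=1.5):
    """Flag frames where a box moves or resizes abruptly.

    A position jump is a center shift larger than `max_shift_ratio` times
    the previous box diagonal; a size jump is an area change by more than
    a factor of `max_area_ratio` in either direction.
    """
    flagged = []
    frames = sorted(track)
    for prev, cur in zip(frames, frames[1:]):
        a, b = track[prev], track[cur]
        width_a, height_a = a[2] - a[0], a[3] - a[1]
        width_b, height_b = b[2] - b[0], b[3] - b[1]
        # Distance between the two box centers.
        shift = math.hypot(
            ((b[0] + b[2]) - (a[0] + a[2])) / 2,
            ((b[1] + b[3]) - (a[1] + a[3])) / 2,
        )
        diagonal = math.hypot(width_a, height_a)
        if diagonal > 0 and shift / diagonal > max_shift_ratio:
            flagged.append((cur, "position_jump"))
        area_a, area_b = width_a * height_a, width_b * height_b
        smaller, larger = min(area_a, area_b), max(area_a, area_b)
        if smaller > 0 and larger / smaller > max_area_ratio:
            flagged.append((cur, "size_jump"))
    return flagged
```

Reviewers can then jump straight to the flagged frames instead of scanning the whole clip.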

This systematic workflow makes every second of video as useful as possible for the model and removes most technical risks before the neural network training phase begins.

FAQ

How often should keyframes be placed for ideal interpolation?

The frequency depends on the complexity of the movement: for uniform motion, one keyframe per second is sufficient, but sharp turns and other abrupt maneuvers need keyframes placed more densely. This allows the algorithm to accurately reproduce the trajectory without deviating from the real object.

What to do if an object in the video is obscured by another?

The annotator must continue to track the object using interpolation even if it is temporarily invisible, maintaining the same ID. This teaches the model to understand that the object has not disappeared but is simply behind an obstacle.

How does video resolution affect temporal stability?

Higher resolution allows for more accurate determination of object boundaries, which reduces bounding box "jitter". This facilitates the work of automatic tracking algorithms, making the data cleaner for AI.

Why is an "ID Swap" between two cars dangerous?

If two cars swap numbers after crossing paths, the model will learn to incorrectly predict their future trajectories. This can lead to critical errors in motion planning for autonomous transport.

How to combat bounding box "drift" during long interpolation?

Drift occurs due to the accumulation of small errors, so every 20–30 frames, the annotator should conduct a visual check. Adding one additional keyframe in the middle usually completely corrects the offset.

What role does optical flow play in video labeling?

Optical flow analyzes the movement of individual pixels and helps automatically adjust the bounding box to the real speed of the object. This allows for achieving much higher precision than ordinary linear interpolation.

How to validate data if the video is shot at 60 FPS?

At high frame rates, checking every frame manually is impractical, so an automatic audit for sharp coordinate jumps is used. Experts review only those sections where the system detected anomalous changes in bounding box size or position.

Does temporal coherence help reduce "noise" in perception models?

Yes, stable data teaches the model to ignore random artifacts and focus on logical movement. This makes the AI's output predictions smoother and more reliable for real-time use.

How is labeling quality regulated for noisy night scenes?

For night videos, wider tolerances for boundary precision are established, but ID persistence requirements remain unchanged. Annotators use brightness filters to see contours better and maintain frame connectivity.