Top physical AI tools and frameworks for developers

With physical AI now powering autonomous robots in industrial automation, developers need tools that bridge the gap between software intelligence and physical interaction.

In this article, we look at the best robotics frameworks, AI simulation tools, and AI toolkits, and at how to choose the right stack to build scalable, production-ready systems.

Quick Take

- Physical AI combines AI models with real-world interactions.

- ROS and ROS 2 are the most widely used robotics frameworks.

- Simulation tools reduce costs and increase safety.

- AI toolkits provide perception and control.

- The right stack depends on scale, use case, and deployment needs.

What is physical AI, and why is it important?

Physical AI refers to artificial intelligence that perceives and acts in the physical world. These systems must process sensor data in real time, make decisions, and act through hardware such as motors and actuators.
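The sense, decide, act cycle described above can be sketched as three small functions. This is a minimal sketch assuming a made-up robot that drives forward and slows down near obstacles; every name, gain, and threshold here is hypothetical, and a real system would run this loop at a fixed rate against real sensor drivers.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    obstacle_distance_m: float  # nearest obstacle, produced by perception


@dataclass
class Command:
    linear_velocity: float  # forward speed sent to the motors (m/s)


def perceive(raw_ranges: list[float]) -> Observation:
    """Sense: reduce raw range readings to the nearest valid obstacle distance."""
    valid = [r for r in raw_ranges if r > 0.0]  # treat 0.0 as "no return"
    return Observation(min(valid) if valid else float("inf"))


def decide(obs: Observation, stop_m: float = 0.5, cruise: float = 1.0, gain: float = 0.5) -> float:
    """Decide: target speed that falls linearly to zero as the obstacle gets close."""
    if obs.obstacle_distance_m <= stop_m:
        return 0.0
    return min(cruise, gain * (obs.obstacle_distance_m - stop_m))


def act(target: float, current: float, max_step: float = 0.2) -> Command:
    """Act: move toward the target speed, limiting the change per cycle."""
    delta = max(-max_step, min(max_step, target - current))
    return Command(current + delta)


speed = 0.0
speeds = []
for ranges in ([3.0, 4.0, 0.0], [2.0, 2.5], [0.9, 1.2], [0.4, 0.6]):
    speed = act(decide(perceive(ranges)), speed).linear_velocity
    speeds.append(round(speed, 2))
print(speeds)  # [0.2, 0.4, 0.2, 0.0]
```

Keeping the three stages separate is what lets each one be swapped independently, for example replacing the hand-written perception with a learned model.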

This creates a set of challenges:

- Real-time processing and latency constraints.

- Fusion of sensor data (vision, LiDAR, audio).

- Safe interaction with dynamic environments.
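Sensor fusion is the easiest of these challenges to show in a few lines. Here is a minimal sketch. It assumes two independent sensors measure the same distance and that each sensor's noise variance is known. Inverse-variance weighting then trusts the less noisy sensor more. This is also what a Kalman filter's update step reduces to when the estimated quantity is not changing. The sensor readings and variances are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    value: float     # estimated distance (m)
    variance: float  # sensor noise variance (m^2); smaller means more trustworthy


def fuse(measurements: list[Measurement]) -> Measurement:
    """Inverse-variance weighted average of independent measurements of one quantity."""
    if not measurements:
        raise ValueError("need at least one measurement")
    weights = [1.0 / m.variance for m in measurements]
    total = sum(weights)
    value = sum(w * m.value for w, m in zip(weights, measurements)) / total
    # The fused variance is smaller than any single sensor's variance.
    return Measurement(value=value, variance=1.0 / total)


# Hypothetical readings: the LiDAR is precise, the camera depth estimate is noisier.
lidar = Measurement(value=2.0, variance=0.01)
camera = Measurement(value=2.2, variance=0.04)
fused = fuse([lidar, camera])
print(f"{fused.value:.3f} m, variance {fused.variance:.4f}")  # 2.040 m, variance 0.0080
```

The LiDAR gets four times the weight of the camera because its variance is four times smaller, so the fused estimate lands much closer to the LiDAR reading.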

These challenges are why physical AI systems need a robust robotics framework and a simulation environment.

Key categories of physical AI tools

Before comparing specific tools, it’s important to understand the ecosystem. Most physical AI stacks are built from three main components:

1. Robotics frameworks provide the foundation for robot software: they handle communication between components and abstract the underlying hardware.

2. AI simulation tools allow you to test and train models in virtual environments before deploying them in the real world.

3. Robotics AI toolkits include machine learning-based perception, planning, and control modules.

Together, these components form a complete pipeline that runs from prototyping and simulation to enterprise deployment.

Best frameworks for robotics

Robotics frameworks are the foundation of a physical AI system. They define how components communicate, how data is processed, and how software interfaces with hardware.

ROS (Robot Operating System)

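ROS is the de facto standard for robotics development. Despite its name, it is not an operating system. It is a set of libraries and tools (middleware) that runs on top of one, typically Linux. It provides a flexible architecture for building complex robotic systems.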

Pros:

- Modular architecture with reusable nodes.

- Large open source ecosystem.

- Strong community and documentation.

ROS is widely used in both research and industry, in applications such as autonomous mobile robots and industrial automation.
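The "reusable nodes" idea rests on publish/subscribe messaging. Nodes never call each other directly; they publish messages to named topics and subscribe to the topics they need. In real code this goes through a ROS client library, such as rospy (ROS 1) or rclpy (ROS 2), and the middleware moves messages between processes. The sketch below imitates only the topic pattern inside a single Python process. `TopicBus`, `ObstacleDetector`, and `SafetyStop` are hypothetical names, not ROS APIs. `/scan` follows the common ROS naming convention for laser scans.

```python
from collections import defaultdict
from typing import Any, Callable


class TopicBus:
    """Toy in-process stand-in for ROS topics (hypothetical, not a ROS API)."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[Any], None]]] = defaultdict(list)

    def subscribe(self, topic: str, callback: Callable[[Any], None]) -> None:
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, message: Any) -> None:
        # Deliver the message to every callback registered on this topic.
        for callback in self._subscribers[topic]:
            callback(message)


class ObstacleDetector:
    """Node: consumes range scans, publishes True when something is too close."""

    def __init__(self, bus: TopicBus, threshold_m: float = 0.5) -> None:
        self.bus = bus
        self.threshold_m = threshold_m
        bus.subscribe("/scan", self.on_scan)

    def on_scan(self, ranges: list[float]) -> None:
        self.bus.publish("/obstacle", min(ranges) < self.threshold_m)


class SafetyStop:
    """Node: consumes the obstacle flag and records whether the robot must stop."""

    def __init__(self, bus: TopicBus) -> None:
        self.stopped = False
        bus.subscribe("/obstacle", self.on_obstacle)

    def on_obstacle(self, detected: bool) -> None:
        self.stopped = detected


bus = TopicBus()
detector = ObstacleDetector(bus)
safety = SafetyStop(bus)
bus.publish("/scan", [1.2, 0.8, 2.0])
print(safety.stopped)  # False
bus.publish("/scan", [1.2, 0.3, 2.0])
print(safety.stopped)  # True
```

The two nodes share only topic names. Because neither depends on the other, either one can be replaced, for example with a learned detector, without touching the rest of the system.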

ROS 2

ROS 2 is the next-generation version, redesigned for enterprise deployment and real-time systems. It uses DDS (Data Distribution Service) as its default communication middleware.

Pros:

- Improved security and scalability.

- Supports real-time communication.

- Better support for distributed systems.

If you are building production-grade systems, ROS 2 is the better choice.
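ROS 2 controls communication behavior through Quality of Service (QoS) policies, set on each publisher and subscription. Examples include history (keep the last N messages or keep all), depth, and reliability (reliable or best effort). For sensor streams, keeping only the newest few messages means a slow consumer acts on fresh data rather than working through a growing backlog. The sketch below imitates just the KEEP_LAST history behavior in plain Python. `KeepLastQueue` is a hypothetical name, not part of rclpy. In rclpy, the real setting is made through `rclpy.qos.QoSProfile` and its `history` and `depth` fields.

```python
from collections import deque


class KeepLastQueue:
    """Imitates the ROS 2 KEEP_LAST history policy: only the newest `depth`
    messages are retained, and older ones are silently dropped."""

    def __init__(self, depth: int) -> None:
        if depth < 1:
            raise ValueError("depth must be >= 1")
        self._buffer: deque = deque(maxlen=depth)
        self.dropped = 0

    def push(self, message) -> None:
        if len(self._buffer) == self._buffer.maxlen:
            self.dropped += 1  # the deque evicts the oldest entry on append
        self._buffer.append(message)

    def take_all(self) -> list:
        # Drain queued messages, oldest first, as a subscriber would process them.
        messages = list(self._buffer)
        self._buffer.clear()
        return messages


# A fast publisher (e.g. a high-rate sensor) feeding a consumer that reads only once.
queue = KeepLastQueue(depth=3)
for stamp in range(10):
    queue.push({"stamp": stamp})
received = [m["stamp"] for m in queue.take_all()]
print(received, "dropped:", queue.dropped)  # [7, 8, 9] dropped: 7
```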

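NVIDIA Isaac

NVIDIA's robotics platform for AI-powered robots. The original standalone Isaac SDK has been superseded. The platform now ships mainly as Isaac ROS (GPU-accelerated ROS 2 packages) and Isaac Sim (a robotics simulator).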

Suitable for:

- GPU-accelerated robotics.

- Deep learning integration.

- High-performance simulation and deployment.

Simulation tools for AI development

Simulation helps reduce costs and increase safety. Instead of testing on physical hardware, you validate models in controlled virtual environments, using simulators such as Gazebo, Webots, or NVIDIA Isaac Sim.

Simulation tools help you:

- Train models faster.

- Test edge cases safely.

- Reduce hardware dependency.
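To make "test edge cases safely" concrete, here is a minimal sketch that uses a toy 1-D robot model instead of a real simulator such as Gazebo or Webots. In each episode, the robot drives toward a wall and tries to stop 0.5 m short of it. Sensor noise, actuator efficiency, and starting position are randomized per episode, a simple form of domain randomization. The check then looks at the worst case across 500 runs rather than a single happy path. The dynamics, controller gain, and ranges are all made-up illustration values.

```python
import random


def run_episode(seed: int, steps: int = 200, dt: float = 0.05) -> float:
    """Simulate one randomized episode; return the closest distance to the wall (m)."""
    rng = random.Random(seed)               # seeded, so every failure is reproducible
    noise_std = rng.uniform(0.0, 0.05)      # range-sensor noise (m)
    efficiency = rng.uniform(0.7, 1.0)      # fraction of commanded speed achieved
    position = rng.uniform(2.0, 5.0)        # starting distance from the wall (m)
    closest = position
    for _ in range(steps):
        measured = position + rng.gauss(0.0, noise_std)
        # Proportional controller toward a 0.5 m stand-off, clamped to [0, 1] m/s.
        command = max(0.0, min(1.0, 1.5 * (measured - 0.5)))
        position -= efficiency * command * dt
        closest = min(closest, position)
    return closest


results = [run_episode(seed) for seed in range(500)]
worst = min(results)
print(f"worst-case clearance: {worst:.3f} m")
assert worst > 0.0, "robot hit the wall in at least one simulated episode"
```

Seeding each episode means any failing case can be replayed exactly. This is much harder to do with a physical robot.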

AI toolkits for robotics

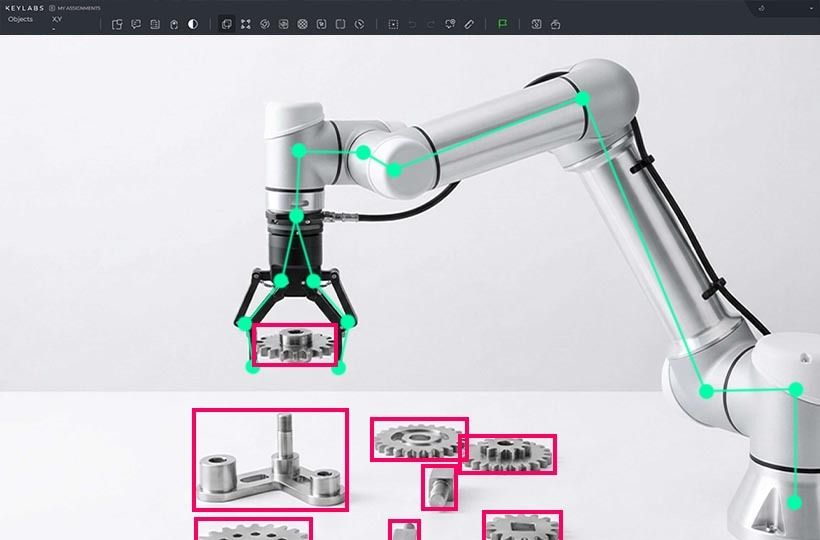

AI toolkits enable perception, decision-making, and control by transforming raw sensor data into actionable insights. Without this layer, robotics frameworks cannot effectively operate in real-world environments.
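As a small example of turning raw sensor data into something actionable, the sketch below reduces a 2-D laser scan to "the nearest obstacle ahead". The `Scan` fields are a simplified subset that mirrors the ROS `sensor_msgs/LaserScan` message (`angle_min`, `angle_increment`, `range_min`, `range_max`, `ranges`). The dataclass and the function `nearest_obstacle_ahead` are hypothetical, and the sector width and readings are illustrative.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class Scan:
    angle_min: float        # angle of the first beam (rad)
    angle_increment: float  # angle between consecutive beams (rad)
    range_min: float        # readings below this are invalid (m)
    range_max: float        # readings above this are invalid (m)
    ranges: list[float]


def nearest_obstacle_ahead(scan: Scan, half_width_rad: float = math.radians(50)
                           ) -> Optional[tuple[float, float]]:
    """Return (distance, angle) of the closest valid return within +/- half_width
    of straight ahead, or None if the forward sector has no valid returns."""
    best = None
    for i, r in enumerate(scan.ranges):
        angle = scan.angle_min + i * scan.angle_increment
        if abs(angle) > half_width_rad:
            continue  # outside the forward sector
        if not (scan.range_min <= r <= scan.range_max):
            continue  # drops inf, NaN, and out-of-range readings
        if best is None or r < best[0]:
            best = (r, angle)
    return best


scan = Scan(angle_min=-math.pi / 2, angle_increment=math.pi / 4,
            range_min=0.1, range_max=10.0,
            ranges=[0.3, 2.0, 1.5, float("inf"), 0.8])
print(nearest_obstacle_ahead(scan))  # (1.5, 0.0)
```

The 0.3 m return at -90 degrees is ignored because it lies outside the forward sector, and the infinite reading is rejected as invalid.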

In practice, developers combine multiple tools depending on the task. For example, computer vision is often handled by OpenCV, which is used to detect and track objects.
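Detection finds objects in a single frame. Tracking also has to decide which detection in the new frame belongs to which object from the previous one. The sketch below uses the simplest common approach, greedy nearest-centroid association, in plain Python. It assumes detections already arrive as pixel centroids from some detector. `update_tracks` is a hypothetical helper, not an OpenCV function, and the 50-pixel gating distance is an arbitrary choice.

```python
import math


def update_tracks(tracks: dict[int, tuple[float, float]],
                  detections: list[tuple[float, float]],
                  next_id: int,
                  max_distance: float = 50.0) -> tuple[dict[int, tuple[float, float]], int]:
    """Greedy nearest-centroid tracking: match closest track/detection pairs first;
    unmatched detections start new tracks, and unmatched tracks are dropped."""
    pairs = sorted(
        (math.dist(tracks[tid], det), tid, di)
        for tid in tracks
        for di, det in enumerate(detections)
    )
    updated: dict[int, tuple[float, float]] = {}
    used_tracks: set[int] = set()
    used_dets: set[int] = set()
    for dist, tid, di in pairs:
        if dist > max_distance:
            break  # pairs are sorted, so every remaining pair is farther
        if tid in used_tracks or di in used_dets:
            continue
        updated[tid] = detections[di]
        used_tracks.add(tid)
        used_dets.add(di)
    for di, det in enumerate(detections):
        if di not in used_dets:
            updated[next_id] = det  # new object entered the scene
            next_id += 1
    return updated, next_id


tracks, next_id = {}, 0
frames = [
    [(100.0, 100.0), (300.0, 120.0)],  # two objects appear
    [(305.0, 125.0), (104.0, 98.0)],   # both move slightly; detector order swaps
    [(108.0, 97.0), (500.0, 400.0)],   # object 1 leaves, a new object enters
]
for detections in frames:
    tracks, next_id = update_tracks(tracks, detections, next_id)
print(tracks)  # {0: (108.0, 97.0), 2: (500.0, 400.0)}
```

IDs stay stable even though the detector reports objects in a different order in frame 2. This stability is what downstream planning relies on.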

For deep learning tasks, such as training perception models, the TensorFlow and PyTorch frameworks provide the flexibility needed to train and deploy neural networks.
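At their core, these frameworks automate a training loop with three steps: a forward pass, a loss gradient, and a parameter update. To stay dependency-free, the sketch below writes that loop out by hand for a single logistic neuron trained with stochastic gradient descent. The "collision risk" dataset and hyperparameters are invented for illustration. In PyTorch, the same loop would typically use an `nn.Module`, a loss such as `nn.BCEWithLogitsLoss`, and an optimizer's `step()`, with gradients computed by autograd.

```python
import math
import random


def train_neuron(samples, labels, epochs=500, lr=0.1, seed=0):
    """Train a single logistic neuron with stochastic gradient descent."""
    rng = random.Random(seed)
    weights = [rng.uniform(-0.1, 0.1) for _ in samples[0]]
    bias = 0.0
    order = list(range(len(samples)))
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            x, y = samples[i], labels[i]
            z = sum(w * xi for w, xi in zip(weights, x)) + bias  # forward pass
            p = 1.0 / (1.0 + math.exp(-z))                        # sigmoid output
            error = p - y  # gradient of binary cross-entropy with respect to z
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]  # update
            bias -= lr * error
    return weights, bias


def predict(weights, bias, x):
    return int(sum(w * xi for w, xi in zip(weights, x)) + bias > 0.0)


# Hypothetical data: (distance to obstacle in m, closing speed in m/s) -> 1 = collision risk.
samples = [(0.3, 1.0), (0.5, 0.8), (0.4, 1.2), (0.6, 1.5),
           (2.0, 0.1), (3.0, 0.3), (2.5, 0.2), (2.2, 0.0)]
labels = [1, 1, 1, 1, 0, 0, 0, 0]
weights, bias = train_neuron(samples, labels)
accuracy = sum(predict(weights, bias, x) == y for x, y in zip(samples, labels)) / len(labels)
print(f"training accuracy: {accuracy:.0%}")
```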

When it comes to movement and interaction with the physical world, tools like MoveIt let you plan robotic-arm motions. Platforms like NVIDIA DeepStream support real-time video analytics, which is important for surveillance, autonomous navigation, and industrial automation.
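MoveIt does much more than this sketch. It runs collision-aware motion planners (by default from the OMPL library) and then time-parameterizes the resulting path. The sketch shows only the simplest piece: a straight-line path in joint space, timed so that no joint exceeds a speed limit and all joints arrive together. It has no collision checking and no acceleration limits. `interpolate_joints` is a hypothetical helper, and the joint values and limits are illustrative.

```python
import math


def interpolate_joints(start: list[float], goal: list[float],
                       max_joint_speed: float, dt: float) -> list[list[float]]:
    """Linear joint-space trajectory from start to goal (radians), sampled every dt seconds.

    The joint that must travel farthest sets the duration, so every joint stays
    within max_joint_speed (rad/s) and all joints finish at the same time."""
    if len(start) != len(goal):
        raise ValueError("start and goal must have the same number of joints")
    if max_joint_speed <= 0 or dt <= 0:
        raise ValueError("max_joint_speed and dt must be positive")
    largest_move = max(abs(g - s) for s, g in zip(start, goal))
    steps = max(1, math.ceil(largest_move / max_joint_speed / dt))
    trajectory = []
    for k in range(steps + 1):
        t = k / steps  # normalized time from 0 to 1
        trajectory.append([s + t * (g - s) for s, g in zip(start, goal)])
    return trajectory


waypoints = interpolate_joints([0.0, 0.0, 0.0], [1.0, -0.5, 0.25], max_joint_speed=0.5, dt=0.5)
for q in waypoints:
    print(q)
# [0.0, 0.0, 0.0] ... [1.0, -0.5, 0.25] in 5 waypoints (2 s at 0.5 s spacing)
```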

Together, these AI toolkits integrate machine learning into robotics pipelines, making systems adaptive and production-ready.

How to choose the right stack

The right physical AI stack depends on the application type, system complexity, and available infrastructure.

For early-stage research or prototyping, a combination of ROS, Gazebo, and OpenCV is usually sufficient. This configuration offers flexibility and rapid iteration without heavy infrastructure requirements.

Production-grade robotic systems typically pair ROS 2 with platforms such as NVIDIA Isaac and deep learning frameworks like PyTorch. This stack supports real-time performance, distributed systems, and enterprise-level deployment.

For small, lightweight projects, a simple setup such as Webots combined with basic machine learning libraries is enough. It keeps complexity low, so you can test basic ideas and validate concepts.

Common challenges in developing physical AI

Even with the right tools, physical AI systems are inherently complex. The hard part is making all the components work together reliably in dynamic, real-world environments.

FAQ

What are robotics frameworks, and why are they important?

Robotics frameworks provide the foundation for building and controlling robotic systems. They handle component communication, hardware abstraction, and real-time processing.

What is ROS AI, and how is it used?

ROS AI refers to the use of ROS (Robot Operating System) with AI models. It allows developers to integrate perception, planning, and control into robotic systems using a modular architecture.

Why are simulation tools important in AI development?

Simulation tools allow you to test models in virtual environments before deploying them in the real world. This reduces costs, increases safety, and helps identify edge cases early in the development process.

What are AI toolkits for robotics?

AI toolkits include frameworks and libraries used for perception, motion planning, and decision-making. They help integrate machine learning into robotics pipelines.

Which stack is best for enterprise deployment?

For enterprise deployment, teams typically use ROS 2 with scalable infrastructure (Docker/Kubernetes), integrated with simulation tools and machine learning frameworks such as PyTorch.

What is the biggest challenge in developing physical AI?

The biggest challenge is integrating hardware, software, and AI models into a system that operates reliably in real time.