Finding the Best Object Recognition Training Datasets

Imagine a world where machines can discern and identify objects with the same acuity as the human eye. Much of the progress toward that ability rests on datasets such as ImageNet, whose more than 14 million images are annotated across roughly 20,000 categories. This vast wellspring of data isn't just an academic curiosity; it's the lifeline for evolving machine learning models and the bedrock for crucial advancements in object recognition.

In the sprawling ecosystem of computer vision datasets, not all data repositories are created equal. Real-world data is often hard to find and requires careful vetting by professional teams such as Keymakr.

The pursuit of artificial intelligence that can navigate the complexities of the visual world hinges on deep learning datasets, which are becoming increasingly intricate and nuanced. With a wealth of benchmarks and papers tethered to their existence, these datasets are not static entities but thriving communities of knowledge exchange and development. As the demand for precise and intelligent object detection surges, the quest for the finest object recognition training datasets becomes an imperative mission for developers and researchers alike.

Key Takeaways

- ImageNet's immense dataset serves as a benchmark for developing machine learning models.

- COCO's detailed annotations are pivotal for object context in machine learning challenges.

- Quality and quantity of annotated data from various sources are essential in diverse applications.

- Computer vision datasets like PASCAL VOC help standardize object category recognition.

- Deep learning datasets enable the technology behind innovative industries like autonomous driving.

- Consistent updates in benchmarks and research papers keep object recognition training datasets versatile and effective.

Understanding Object Recognition in Computer Vision

Central to developing effective object detection models is the necessity for object recognition training datasets. These are expansive collections of images that have been accurately annotated to reflect a multitude of object types that models may encounter in real-world scenarios. Such datasets are not only robust but also diverse, ensuring that the computer vision algorithms trained on them can operate effectively across different environments and conditions.

For instance, ImageNet contains over 14 million labeled images, and its widely used ILSVRC subset provides about 1.2 million training images across 1,000 categories, which are instrumental in training algorithms for precise object identification. The models trained on such data have advanced as well: real-time detectors such as YOLO (You Only Look Once) are known for their speed and accuracy in identifying and classifying multiple objects in a single image frame.

The training processes involved often utilize deep learning models, which require substantial data to learn from. This learning process includes not only recognizing an object within an image but also accurately predicting its size and location within the image. These capabilities are crucial in many practical applications, from security surveillance systems to interactive augmented reality experiences.
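One common way to score how well a predicted box matches an annotated one is intersection over union (IoU). The sketch below is a minimal, illustrative version; the `(x_min, y_min, x_max, y_max)` box layout and the sample coordinates are assumptions made for the example, not taken from any particular dataset.

```python
from typing import Tuple

# Assumed layout for this sketch: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def iou(box_a: Box, box_b: Box) -> float:
    """Return the intersection over union of two axis-aligned boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlap region (zero if the boxes do not overlap).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    intersection = inter_w * inter_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - intersection

    return intersection / union if union > 0 else 0.0


# Hypothetical annotated box and model prediction.
ground_truth = (10.0, 10.0, 50.0, 50.0)
prediction = (30.0, 30.0, 70.0, 70.0)
score = iou(ground_truth, prediction)
```

A score of 1.0 means the two boxes coincide and 0.0 means they do not overlap at all; benchmarks typically count a detection as correct only above a chosen IoU threshold.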

Enhancing these models' accuracy depends not just on the quantity but also on the quality of the computer vision datasets. Classical techniques such as Scale-Invariant Feature Transform (SIFT) and Histogram of Oriented Gradients (HOG) extract hand-crafted features, while deep learning models learn their features directly from the data. In both cases, the training data must cover the variations a model will face, such as different lighting, angles, or obscured views.
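To give a feel for what a HOG-style descriptor captures, here is a deliberately simplified sketch: it builds a single magnitude-weighted histogram of gradient orientations for a tiny grayscale patch. It leaves out the cell grid, bin interpolation, and block normalization of full HOG, and the function name and toy patches are illustrative assumptions.

```python
import math
from typing import List


def orientation_histogram(patch: List[List[float]], bins: int = 9) -> List[float]:
    """Magnitude-weighted histogram of unsigned gradient orientations (0 to 180 degrees)."""
    height, width = len(patch), len(patch[0])
    hist = [0.0] * bins
    bin_width = 180.0 / bins

    # Central differences on interior pixels only; border pixels are skipped.
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = patch[y][x + 1] - patch[y][x - 1]
            gy = patch[y + 1][x] - patch[y - 1][x]
            magnitude = math.hypot(gx, gy)
            if magnitude == 0.0:
                continue
            # Fold the direction into [0, 180) so opposite gradients share a bin.
            angle = math.degrees(math.atan2(gy, gx)) % 180.0
            index = min(int(angle // bin_width), bins - 1)
            hist[index] += magnitude

    total = sum(hist)
    return [value / total for value in hist] if total > 0 else hist


# Toy 5x5 patches: a vertical edge (dark left, bright right) and a horizontal edge.
vertical_edge = [[0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]
horizontal_edge = [[0.0] * 5, [0.0] * 5, [1.0] * 5, [1.0] * 5, [1.0] * 5]

vertical_hist = orientation_histogram(vertical_edge)
horizontal_hist = orientation_histogram(horizontal_edge)
```

The two edges land in different bins, which is the property that lets such descriptors tell shapes apart even when overall brightness changes.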

The sophistication of object recognition technology in computer vision continues to grow, driven by the development and enhancement of training datasets. As these datasets expand both in size and quality, we can expect further advancements in this technology which will undoubtedly open new avenues for innovation and application in everyday technology.

Why Diverse and Accurate Object Recognition Training Datasets Are Essential

The importance of annotated datasets, supervised learning datasets, and labeled datasets cannot be overstated in the field of computer vision and machine learning. These resources are fundamental to developing robust models capable of performing tasks such as image classification and object detection with high precision.

The Role of Datasets in Computer Vision Model Accuracy

For a machine learning model to accurately identify and classify objects, it relies heavily on the quality and diversity of image classification datasets. Such datasets provide real-world conditions that help algorithms understand and interpret various scenes effectively, directly influencing the success of applications like autonomous driving and facial recognition systems.

Benefits of a Rich and Varied Image Data Pool

Deep learning datasets that feature a variety of objects and scenarios are crucial for enhancing algorithm performance. The additional complexity and variance in these datasets train models to be adaptive, decreasing the chance of errors in uncontrolled environments, and increasing their practical applicability across different sectors.
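A quick way to check how varied a dataset really is: count how its labels are distributed. The sketch below is a minimal example under stated assumptions; the function names and the toy label list are hypothetical, and real datasets would supply labels from their own annotation files.

```python
from collections import Counter
from typing import Dict, List


def class_shares(labels: List[str]) -> Dict[str, float]:
    """Return each class's share of all labeled instances."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {name: count / total for name, count in counts.items()}


def imbalance_ratio(labels: List[str]) -> float:
    """Ratio between the most and least frequent class (1.0 means perfectly balanced)."""
    counts = Counter(labels)
    return max(counts.values()) / min(counts.values())


# Hypothetical labels pulled from an annotation file.
labels = ["car"] * 6 + ["pedestrian"] * 3 + ["cyclist"] * 1

shares = class_shares(labels)
ratio = imbalance_ratio(labels)
```

A large ratio suggests that rare classes may need more examples before a model can recognize them reliably in uncontrolled environments.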

| Dataset | Images | Object Categories | Special Annotations |
|---|---|---|---|
| COCO | Over 200,000 labeled | 80 | Category Labels, Bounding Boxes |
| Pascal VOC | Varies | 20 | Bounding Boxes, Segmentation Masks |
| ImageNet (ILSVRC subset) | Over 1.2 million | 1,000 | Bounding Boxes |
| Open Images | Over 9 million | N/A | Object-Level Annotations, Bounding Boxes |
| KITTI | Varies | Specific to autonomous driving | Images, Lidar, Annotations for Cars, Pedestrians |

Exploring Publicly Available Object Recognition Training Datasets

The field of machine learning and object detection continues to evolve with the rapid development and accessibility of public datasets for object detection. Among these, some stand out due to their size, diversity, and the range of annotations they offer. Here we delve into notable datasets that have significantly contributed to advancements in image classification datasets.

First, the ImageNet dataset, a cornerstone among machine learning datasets, contains over 14 million images spread across more than 20,000 categories. This vast variety makes it an invaluable resource for training and refining machine learning algorithms. Second, the COCO dataset (Common Objects in Context) features 330,000 images, more than 200,000 of them labeled, with 1.5 million object instances annotated across 80 object categories, making it a rich source for training models to understand objects in varied contexts and environments.

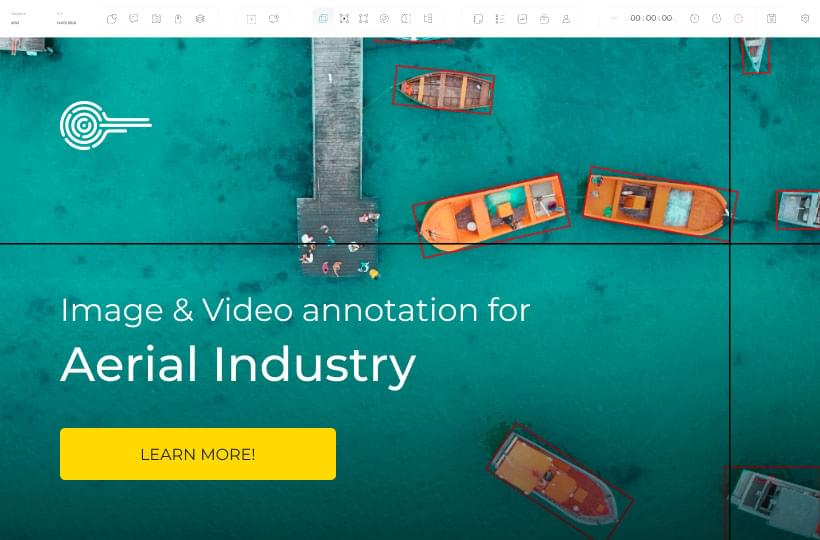

Further enhancing the landscape of public datasets for object detection are collections like PASCAL VOC, whose images are annotated across 20 object classes, and the DOTA dataset, which targets aerial object detection with its 11,268 images and 1.8 million object instances. Each dataset, including others like Berkeley Deep Drive (BDD100K), Visual Genome, and nuScenes, serves a unique purpose in training algorithms to accurately and effectively recognize objects under different conditions and scenarios.

The availability of such comprehensive public datasets allows for a broader development of more robust machine learning models, which are crucial for advancing not just in theoretical research, but practical applications across various sectors including automotive, security, and urban planning.

Characteristics of High-Quality Machine Learning Datasets

In the realm of artificial intelligence, the phrase "data is the new oil" couldn’t be more accurate. The efficacy of machine learning models is profoundly determined by the quality of their training data. For AI projects involving computer vision datasets and image detection datasets, meticulous precision in annotating and sourcing data is imperative.

Annotating Images for Precision in Object Detection

Annotation is the bedrock of effective object detection within deep learning datasets. In practice, this means marking exactly where each object is and what it is. Annotators label objects with bounding boxes, segmentation masks, or keypoints that delineate the object’s shape, size, and spatial location in the imagery. This detailed annotation is vital for the system to learn from the deep learning datasets and perform with high accuracy and precision. Platforms like Keylabs are built specifically for that.
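Bounding boxes are not stored the same way everywhere, which matters when combining datasets. COCO annotations record a box as `[x, y, width, height]` from the top-left corner, while Pascal VOC records the two corners (`xmin`, `ymin`, `xmax`, `ymax`). The sketch below converts between the two; the function names and the sample annotation values are hypothetical.

```python
from typing import List, Tuple


def coco_to_corners(bbox: List[float]) -> Tuple[float, float, float, float]:
    """Convert [x, y, width, height] to (x_min, y_min, x_max, y_max)."""
    x, y, width, height = bbox
    return (x, y, x + width, y + height)


def corners_to_coco(box: Tuple[float, float, float, float]) -> List[float]:
    """Convert (x_min, y_min, x_max, y_max) back to [x, y, width, height]."""
    x_min, y_min, x_max, y_max = box
    return [x_min, y_min, x_max - x_min, y_max - y_min]


# Hypothetical COCO-style annotation record (values invented for illustration).
annotation = {"image_id": 1, "category_id": 3, "bbox": [100.0, 50.0, 40.0, 80.0]}

corners = coco_to_corners(annotation["bbox"])
round_trip = corners_to_coco(corners)
```

Mixing up the two layouts is an easy mistake to make, so a round-trip check like the one above is a cheap sanity test before training.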

Sourcing Datasets with Reliable Licensing and Citations

Equally critical is the acquisition of licensed datasets. Ensuring that datasets are not only rich in quality but also backed by proper licensing is vital for legal and ethical utilization. These licensed datasets come with explicit terms of use, whether for commercial gains or academic purposes. They're harder to come by and often require robust networks of partnerships.
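Licensing can also be checked programmatically when a dataset ships license information per image. The sketch below assumes a COCO-style layout, with a top-level `licenses` list and a `license` id on each image; the license names, file names, and the set of allowed licenses are placeholders, and deciding which licenses fit a project remains a legal question rather than a coding one.

```python
from typing import Any, Dict, List, Set


def images_with_allowed_license(
    dataset: Dict[str, Any], allowed_names: Set[str]
) -> List[Dict[str, Any]]:
    """Keep only images whose license name appears in the allowed set."""
    allowed_ids = {
        entry["id"] for entry in dataset["licenses"] if entry["name"] in allowed_names
    }
    return [image for image in dataset["images"] if image.get("license") in allowed_ids]


# Placeholder data in a COCO-like shape; names and ids are invented for illustration.
dataset = {
    "licenses": [
        {"id": 1, "name": "Attribution License"},
        {"id": 2, "name": "Attribution-NonCommercial License"},
    ],
    "images": [
        {"id": 10, "file_name": "a.jpg", "license": 1},
        {"id": 11, "file_name": "b.jpg", "license": 2},
        {"id": 12, "file_name": "c.jpg", "license": 1},
        {"id": 13, "file_name": "d.jpg"},  # no license recorded, so it is excluded
    ],
}

permitted = images_with_allowed_license(dataset, {"Attribution License"})
permitted_files = [image["file_name"] for image in permitted]
```

Images with no recorded license are dropped here on purpose, since missing terms of use are a risk in themselves.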

Computer vision datasets thus require not just quantity but also verified quality and a clear legal footing. Balancing these elements helps ensure that models not only perform well but also operate within the bounds of ethical AI practices. As the field evolves, these practices are becoming the standard for building AI systems that are both powerful and trustworthy.

Object Detection in Practice: Analyzing Real-World Applications

The integration of computer vision datasets into real-world object detection systems has markedly transformed various sectors, enhancing operational efficiency and innovation. These systems are intricate assemblies where machine learning meets practical usability, relying heavily on advanced models fine-tuned with comprehensive data. Their applications range from healthcare, where they enable early diagnosis of diseases, to retail environments that use surveillance for enhanced security and customer behavior analysis.

In healthcare, machine learning applications using computer vision datasets have proven instrumental. They assist in detecting abnormalities such as tumors at a much earlier stage, potentially increasing patient survival rates. Furthermore, in retail, AI-driven systems analyze customer interactions and monitor inventory levels, helping stores optimize their operations and improve customer satisfaction.

Real-world object detection is also pivotal in enhancing security measures. Airports and public spaces employ sophisticated models that detect suspicious behaviors, contributing to safer environments. The same technology extends to the automotive industry, where object detection aids in developing autonomous driving technologies, improving road safety by recognizing pedestrians, obstacles, and traffic signs swiftly and accurately.

Each of these applications underscores the critical role of high-quality computer vision datasets. The data's depth and breadth ensure that the models perform effectively across diverse conditions and settings, which is paramount in deploying these technologies on a large scale. The continuous advancement in real-world object detection further facilitates the expansion into new areas, solidifying the presence and importance of machine learning applications in everyday technology.

Object Recognition Training Datasets for Autonomous Driving Technologies

The evolution of autonomous driving technologies deeply relies on the quality and comprehensiveness of object recognition training datasets. Among the most pivotal in this domain are BDD100K, nuScenes, and the KITTI Vision Benchmark Suite, each offering unique data compositions that cater to the complex needs of autonomous systems.

Datasets Catering to Multisensor Data: nuScenes and BDD100K

Multi-sensor datasets like nuScenes and BDD100K are essential for developing versatile and robust autonomous driving systems. nuScenes, with its rich compilation of over 1.4 million images and extensive lidar sweeps from urban landscapes of Boston and Singapore, provides a comprehensive autonomous driving dataset. Similarly, BDD100K encapsulates diverse driving conditions through 100,000 videos labeled for various road scenarios, significantly aiding in the training of AI to understand and navigate complex environments effectively.

Leveraging Labeled Datasets: The KITTI Vision Benchmark Suite

The KITTI Vision Benchmark Suite stands as a cornerstone for autonomous driving research, particularly in object detection, enabling the development of advanced driver-assistance systems. It offers a plethora of real-world driving data, including nearly 39,000 meticulously labeled images that facilitate the detailed study and improvement of autonomous driving algorithms.

The integral role of such labeled datasets can’t be overstressed, as they allow for the detailed annotation of various road elements. From pedestrians to traffic signs, every piece of data contributes significantly to enhancing the accuracy and reliability of autonomous driving technologies.

| Dataset | Size | Labeled Elements | Use Case in Autonomous Driving |
|---|---|---|---|
| BDD100K | 100,000 videos | Road objects, lane markings | Training models for diverse road conditions |
| nuScenes | 1.4 million images | Objects, bounding boxes, lidar sweeps | Sensor fusion models for object detection |
| KITTI Vision Benchmark Suite | Nearly 39,000 images | Cars, pedestrians, traffic signs | Benchmarking algorithms for real-time environments |

Emerging Trends in Object Recognition Dataset Development

The landscape of object recognition is constantly evolving, with new methodologies enhancing how these datasets are developed and utilized, particularly in fields such as AI edge computing and metadata management.

Incorporating Annotated Datasets for AI Edge Computing

AI edge computing represents a significant shift in data processing, allowing computations to be performed at the edge of the network, closer to the data source. This is particularly relevant in computer vision datasets where the immediacy of data processing can be critical. For instance, annotated datasets used in AI edge computing enable real-time object recognition which is crucial for applications like autonomous vehicles and smart surveillance systems. The integration of richly annotated data ensures that the models developed are not only fast but also accurate and reliable in various environments.

The Importance of Metadata in Image Detection Datasets

Metadata plays a pivotal role in maximizing the efficacy of image detection datasets. Detailed metadata includes information such as the time of capture, geographical location, and environmental conditions, all of which provide additional layers of context for object recognition tasks. This rich metadata helps to refine AI models, ensuring they are adaptable and capable of functioning accurately under diverse conditions. Enhanced metadata schemas contribute significantly to the advancements in how datasets support the training of sophisticated object recognition models.
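One practical use of such metadata is breaking evaluation results down by condition, which shows where a model struggles. The following is a minimal sketch; the field names (`timeofday`, `weather`, `correct`) and the sample records are hypothetical stand-ins for whatever a given dataset and evaluation pipeline provide.

```python
from collections import defaultdict
from typing import Any, Dict, List


def accuracy_by(records: List[Dict[str, Any]], field: str) -> Dict[str, float]:
    """Group per-image results by a metadata field and return accuracy per group."""
    hits: Dict[str, int] = defaultdict(int)
    totals: Dict[str, int] = defaultdict(int)
    for record in records:
        key = record[field]
        totals[key] += 1
        hits[key] += int(record["correct"])
    return {key: hits[key] / totals[key] for key in totals}


# Hypothetical per-image results joined with capture metadata.
records = [
    {"timeofday": "day", "weather": "clear", "correct": True},
    {"timeofday": "day", "weather": "rainy", "correct": True},
    {"timeofday": "night", "weather": "clear", "correct": True},
    {"timeofday": "night", "weather": "rainy", "correct": False},
]

by_time = accuracy_by(records, "timeofday")
by_weather = accuracy_by(records, "weather")
```

A gap between groups, such as lower accuracy at night, points to the conditions where more annotated data would help most.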

The synergy between high-quality annotations and comprehensive metadata is transforming computer vision datasets, making them more potent for real-world applications where conditions can vary drastically. By embracing these trends, the field is moving towards more robust, dynamic, and context-aware systems in object recognition, securing its place at the forefront of AI innovation.

Summary

The landscape of computer vision has been fundamentally transformed by the development of extensive object recognition training datasets. As we have explored, datasets such as BDD100K, with its 100,000 high-definition videos, are major contributors to the advancement of machine learning models, and others such as the BOLD Dataset, with 1,348 videos, add further variety. These collections provide an indispensable range of data points across geographies, environmental conditions, and a multitude of use cases. In autonomous driving in particular, robust datasets are critical; they foster the creation of systems capable of understanding and navigating the complexities of real-world driving.

Data intricacy continues to expand, with drone footage and first-person interactions captured in sets like DroneCrowd and EgoHands, which help computer vision systems cope with dynamic settings. This, coupled with the purpose-built Objectron Dataset and the meticulously annotated frames in the YouTube-BoundingBoxes dataset, signifies a trend towards rich, dimensional, and contextually nuanced data. Furthermore, research in skin lesion detection, which reports high accuracies on medical datasets, underscores the advances these data resources make possible.

FAQ

What are object recognition training datasets?

Object recognition training datasets are collections of labeled images used to train machine learning algorithms in identifying and detecting objects within visual media. They contain various annotated examples that enable algorithms to learn from and adapt to diverse visual scenarios.

Why is diversity in object recognition training datasets important?

Diversity in these datasets ensures that object recognition models are exposed to a wide range of scenarios and object variations. This improves the models' ability to generalize and accurately recognize objects in different and unforeseen environments.

What are some of the leading public datasets for object detection?

Leading public datasets include ImageNet, Microsoft COCO, PASCAL VOC, Berkeley Deep Drive (BDD100K), Visual Genome, nuScenes, and DOTA. Each offers a unique set of images and annotations to aid in the development and benchmarking of machine learning models.

How do annotations enhance object detection datasets?

Annotations such as bounding boxes, segmentation masks, and keypoints define the relevant features of objects in images. They are crucial for training accurate object detection models by teaching them exactly where and what the objects are in a variety of contexts.

What should be considered when choosing an object recognition training dataset?

Consider the dataset's annotation quality, diversity of images, relevance to the specific use case, and legal aspects such as licensing and proper citations. Using datasets with well-defined licenses and citations ensures ethical usage and acknowledgment of contributors.

Why is the ImageNet dataset significant for object recognition?

ImageNet is significant for object recognition due to its extensive hierarchy of over 14 million labeled images across more than 20,000 categories. It played a key role in advancing machine learning techniques and models like AlexNet in the computer vision community.

How are object recognition training datasets used in real-world applications?

In real-world applications, these datasets train machine learning models deployed in various industries for tasks such as product scanning in retail, patient diagnostics in healthcare, security surveillance, and pathfinding in autonomous vehicles.

What specific datasets are used for autonomous driving technology?

Datasets such as BDD100K, nuScenes, and the KITTI Vision Benchmark Suite are specifically designed for autonomous driving technology. They provide images with annotations relevant to driving scenarios, along with data from various sensors and environmental metadata.

How do emerging trends in dataset development affect object recognition?

Emerging trends like AI edge computing and the inclusion of metadata in datasets are driving the development of more efficient object recognition technologies that process data in real time, close to the source of data acquisition, and with greater contextual understanding.