Preference annotation for LLM alignment

Large language models need clear guidance to become helpful assistants. Preference annotation supplies that guidance by collecting human feedback that reflects what people actually want.

A practical method for this task is to present two options side by side. It is easier for people to choose between two items than to evaluate everything at once. This approach provides reliable data for training AI systems.

The same research methods used in surveys and decision-making studies are used to align advanced AI models. When people compare responses, they provide valuable information about quality and usefulness. This feedback trains reward models that guide language models toward better performance.

This structured feedback collection process is commonly referred to as RLHF annotation, where human evaluators compare model outputs to guide alignment. It forms the basis of reinforcement learning from human feedback (RLHF), which makes systems more responsive to what users want.

Quick Take

- This research method provides more consistent human feedback for training AI.

- The technique supports reinforcement learning to align language models.

- Pairwise data collection mimics natural human decision-making processes.

- Understanding these methods helps create more responsive AI systems.

Pairwise comparison basics

Pairwise comparison is a basic method for collecting preferences in LLM alignment tasks. Annotators are shown two possible responses to the same query and asked which is better according to given criteria. Unlike absolute scoring, in which each response must be rated on a scale, pairwise comparison reduces cognitive load and increases rating consistency. In practice, RLHF annotation provides the structured preference data required to train reward models and improve response quality.

Key concepts and terminology of pairwise comparison

- Preference data: human preferences obtained by choosing the better response in a pair. Each choice forms a preference pair (A > B), which means that response A is more desirable than response B.

- Reward model: a separate model trained on a large number of such pairs. It approximates the reward function and predicts which response a person will consider better.

- Ranking: the ordering of multiple responses by quality, which can be decomposed into a set of pairwise comparisons.

- Bradley–Terry model: a probabilistic model often used to describe preferences mathematically. It estimates the probability of choosing one response over another.

- Alignment: bringing the model's behavior in line with human values. An alternative alignment strategy is constitutional AI, where models are guided by predefined principles instead of relying solely on human comparison data.

- Preference distribution: the distribution of human preferences across the compared responses.

- Annotator agreement: the degree to which different annotators make the same choice on the same pair.

- Bias: a systematic deviation in the estimates, as opposed to random noise.
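As a rough illustration of the terms above, a preference pair can be stored as a small record, and annotator agreement can be approximated as the share of judgments that match the majority choice. The names `PreferencePair` and `agreement_rate` are hypothetical, and majority agreement is only one simple measure among several possible ones.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class PreferencePair:
    """One annotator judgment: `chosen` was preferred over `rejected`."""
    query: str
    chosen: str
    rejected: str
    annotator_id: str


def agreement_rate(judgments: list[PreferencePair]) -> float:
    """Fraction of judgments on one response pair that match the majority choice."""
    votes = Counter(j.chosen for j in judgments)
    majority_count = votes.most_common(1)[0][1]
    return majority_count / len(judgments)


# Three annotators judge the same two responses to the same query.
judgments = [
    PreferencePair("What is 2 + 2?", "4", "5", "ann_1"),
    PreferencePair("What is 2 + 2?", "4", "5", "ann_2"),
    PreferencePair("What is 2 + 2?", "5", "4", "ann_3"),
]
print(agreement_rate(judgments))  # two of three annotators chose "4"
```

A low agreement rate on many pairs may point to unclear instructions or to pairs that are genuinely hard to separate.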

The quality of a pairwise comparison depends on the evaluation criteria: correctness, completeness, safety, relevance, usefulness, and style.

Annotation instructions, golden samples, and consistency checks are used to minimize noise. A balance between the variety of responses and the complexity of the queries is important because obvious or similar pairs reduce the informativeness of the data.
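One way to use golden samples is to mix pairs with a known expected answer into an annotator's queue and measure how often the annotator matches them. The sketch below assumes a hypothetical layout in which choices are stored as `"A"` or `"B"` per pair ID, and the threshold `MIN_ACCURACY` is an illustrative value, not a recommended one.

```python
def gold_accuracy(annotations: dict[str, str], gold: dict[str, str]) -> float:
    """Share of golden pairs on which the annotator picked the expected response."""
    scored = [pair_id for pair_id in gold if pair_id in annotations]
    if not scored:
        raise ValueError("annotator has not seen any golden pairs")
    correct = sum(annotations[pair_id] == gold[pair_id] for pair_id in scored)
    return correct / len(scored)


# Expected choices for the golden pairs (hypothetical data).
gold = {"pair_1": "A", "pair_2": "B", "pair_3": "A"}
# One annotator's choices; pair_9 is a regular pair and is ignored by the check.
annotator = {"pair_1": "A", "pair_2": "A", "pair_3": "A", "pair_9": "B"}

MIN_ACCURACY = 0.8  # hypothetical quality threshold
accuracy = gold_accuracy(annotator, gold)
needs_review = accuracy < MIN_ACCURACY
print(accuracy, needs_review)
```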

Basic elements of a pairwise comparison

Advantages of using pairwise comparisons in surveys

Pairwise comparisons are used in surveys, user experience studies, and LLM annotation because they tend to give more consistent results than traditional scale scores. The method is particularly useful in model alignment tasks such as reinforcement learning from human feedback (RLHF).

How pairwise comparisons work in practice

In practice, pairwise comparisons elicit human preferences by asking which of two options is better according to specific criteria.

How the process works

- Query generation. A query is generated that the model should answer.

- Response generation. The model creates two different answers to the same query.

- Pair presentation to the annotator. The annotator is shown two answers (A and B) without any indication of which is "original" or "better".

- Option selection. The annotator selects the answer that better meets the specified criteria (accuracy, usefulness, safety, completeness, style).

- Preference recording (A > B). The result is stored as a preference pair, reflecting which answer is better.

- Data aggregation. Many such choices are combined to form a preference dataset.

- Reward model training or retraining. The collected pairs are used to train a new reward model or to retrain an existing one.
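The presentation and recording steps above can be sketched as follows. This is a minimal illustration under stated assumptions: the function names `present_pair` and `record_preference` and the dictionary layout are hypothetical, and the random assignment to slots A and B is one simple way to avoid hinting at which answer is "original".

```python
import random


def present_pair(response_1: str, response_2: str, rng: random.Random) -> dict[str, str]:
    """Randomly assign the two responses to slots A and B to hide their origin."""
    if rng.random() < 0.5:
        return {"A": response_1, "B": response_2}
    return {"A": response_2, "B": response_1}


def record_preference(query: str, shown: dict[str, str], choice: str) -> dict[str, str]:
    """Store the annotator's choice ("A" or "B") as a chosen/rejected record."""
    if choice not in ("A", "B"):
        raise ValueError("choice must be 'A' or 'B'")
    other = "B" if choice == "A" else "A"
    return {"query": query, "chosen": shown[choice], "rejected": shown[other]}


rng = random.Random(0)  # fixed seed so the example is repeatable
query = "Explain what a reward model is."
shown = present_pair("draft answer one", "draft answer two", rng)

# Aggregation: each recorded choice becomes one row of the preference dataset.
dataset = [record_preference(query, shown, "A")]
print(dataset[0])
```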

Pairwise comparison calculation methods

Calculation methods convert raw annotation results into formalized data for model training or response ranking.

Win ratio approach

This approach counts how many times a response was chosen and divides that count by the number of comparisons in which it appeared, which gives its win ratio. The win ratio indicates how strongly each option is preferred overall.

The win ratio is helpful for quickly estimating overall preferences without complex calculations and for initial sorting of large response sets.
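A win ratio can be computed with a few counters. The sketch below assumes each comparison is stored as a hypothetical `(winner, loser)` tuple; the function name `win_ratios` is illustrative.

```python
from collections import defaultdict


def win_ratios(pairs: list[tuple[str, str]]) -> dict[str, float]:
    """Wins divided by appearances for every response in (winner, loser) pairs."""
    wins: dict[str, int] = defaultdict(int)
    appearances: dict[str, int] = defaultdict(int)
    for winner, loser in pairs:
        wins[winner] += 1
        appearances[winner] += 1
        appearances[loser] += 1
    return {response: wins[response] / appearances[response] for response in appearances}


# Four comparisons among three responses (hypothetical data).
pairs = [("A", "B"), ("A", "C"), ("B", "C"), ("A", "B")]
ratios = win_ratios(pairs)
ranking = sorted(ratios, key=ratios.get, reverse=True)
print(ratios)
print(ranking)
```

Note that the win ratio ignores the strength of the opponents: a response that was compared only with weak alternatives can look better than it is.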

Probabilistic and manual methods

More sophisticated methods use probabilistic models that estimate the chance of choosing one response over another based on all available pairs. Examples include the Bradley–Terry model and other statistical ranking models. They account for ambiguous or conflicting annotation choices and produce a more reliable ranking of responses than raw counts.
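In the Bradley–Terry model, each response i has a positive strength p_i, and the probability that i is preferred over j is p_i / (p_i + p_j). The sketch below fits these strengths with a simple iterative update (a standard minorization-maximization scheme); the source does not prescribe a fitting procedure, so this choice, the function name `fit_bradley_terry`, and the `(winner, loser)` data layout are assumptions. It also assumes every response wins at least once, since otherwise its strength collapses to zero.

```python
def fit_bradley_terry(pairs: list[tuple[str, str]], n_iter: int = 200) -> dict[str, float]:
    """Estimate Bradley-Terry strengths from (winner, loser) pairs."""
    items = sorted({response for pair in pairs for response in pair})
    wins = {item: 0 for item in items}
    counts: dict[tuple[str, str], int] = {}  # comparisons per unordered pair
    for winner, loser in pairs:
        wins[winner] += 1
        key = tuple(sorted((winner, loser)))
        counts[key] = counts.get(key, 0) + 1

    strengths = {item: 1.0 for item in items}
    for _ in range(n_iter):
        updated = {}
        for item in items:
            denom = 0.0
            for (a, b), count in counts.items():
                if item in (a, b):
                    denom += count / (strengths[a] + strengths[b])
            updated[item] = wins[item] / denom if denom > 0 else strengths[item]
        total = sum(updated.values())
        strengths = {item: value / total for item, value in updated.items()}  # normalize to sum 1
    return strengths


# Hypothetical annotations with some disagreement between annotators.
pairs = (
    [("A", "B")] * 3 + [("B", "A")]
    + [("B", "C")] * 3 + [("C", "B")]
    + [("A", "C")] * 2 + [("C", "A")]
)
strengths = fit_bradley_terry(pairs)
prob_a_over_b = strengths["A"] / (strengths["A"] + strengths["B"])
print(strengths)
print(prob_a_over_b)
```

Unlike the win ratio, the fitted strengths take into account which opponents each response faced.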

Manual methods involve annotators evaluating and ranking responses based on their own experience or expert criteria, without complex mathematical models.

Manual methods are used for data quality control or to verify the results of automated models.

In combination with the methods above, they create a flexible and robust toolkit for pairwise comparison analysis and allow converting human preferences into structured signals for LLM training and alignment.

Different types of pairwise comparisons

Different projects require different methods of pairwise evaluation. In data collection practice, several types of pairwise comparison are used, which lets you adapt the process to specific goals and resources.

Full and partial comparisons

Full comparisons involve evaluating all possible pairs of answers for each query. This provides an accurate ranking but becomes time-consuming as the number of options grows, because n answers produce n(n-1)/2 pairs.

Partial comparisons are limited to a subset of pairs, which reduces the workload on the annotator while preserving enough information to build preference models.
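The difference in workload can be seen by enumerating the pairs. The sketch below uses uniform random sampling to pick the subset, which is only one possible strategy and an assumption of this example; the function names are hypothetical.

```python
import itertools
import random


def all_pairs(responses: list[str]) -> list[tuple[str, str]]:
    """Full comparison: every unordered pair of responses."""
    return list(itertools.combinations(responses, 2))


def sample_pairs(responses: list[str], k: int, seed: int = 0) -> list[tuple[str, str]]:
    """Partial comparison: a random subset of at most k distinct pairs."""
    pairs = all_pairs(responses)
    rng = random.Random(seed)
    return rng.sample(pairs, min(k, len(pairs)))


responses = [f"response_{i}" for i in range(10)]
full = all_pairs(responses)              # 10 * 9 / 2 = 45 pairs
partial = sample_pairs(responses, k=15)  # 15 of those 45 pairs
print(len(full), len(partial))
```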

Adaptive, forced, and image-based comparisons

Another classification criterion is the method used to select pairs for comparison. In adaptive comparisons, the next pair is selected based on the results already obtained. The system chooses the pairs whose outcome is most uncertain or that provide the most information for training the model.
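A simple form of adaptive selection picks the pair whose predicted outcome is closest to a coin flip. The sketch below assumes current strength estimates are available (for example, from a Bradley–Terry fit) and uses the distance of the predicted win probability from 0.5 as the uncertainty measure; both the function name `most_uncertain_pair` and this criterion are illustrative assumptions.

```python
import itertools


def most_uncertain_pair(strengths, already_asked=frozenset()):
    """Return the not-yet-asked pair whose predicted win probability is closest to 0.5."""
    best_pair, best_gap = None, float("inf")
    for a, b in itertools.combinations(sorted(strengths), 2):
        if frozenset((a, b)) in already_asked:
            continue
        prob_a = strengths[a] / (strengths[a] + strengths[b])
        gap = abs(prob_a - 0.5)
        if gap < best_gap:
            best_pair, best_gap = (a, b), gap
    return best_pair


# Hypothetical current strength estimates for three responses.
strengths = {"A": 0.55, "B": 0.30, "C": 0.15}
first = most_uncertain_pair(strengths)
second = most_uncertain_pair(strengths, already_asked={frozenset(("A", "B"))})
print(first, second)
```

In a real pipeline the strengths would be re-estimated after each batch of answers, so the selected pairs change as the data accumulates.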

In forced comparisons, the annotator must evaluate a predefined set of specific pairs, which helps ensure data accuracy and consistency.

For multimodal tasks, image-based comparison is used, in which the responses of models that contain or describe visual content are evaluated, and annotators choose the best option according to given criteria.

Comparison format characteristics

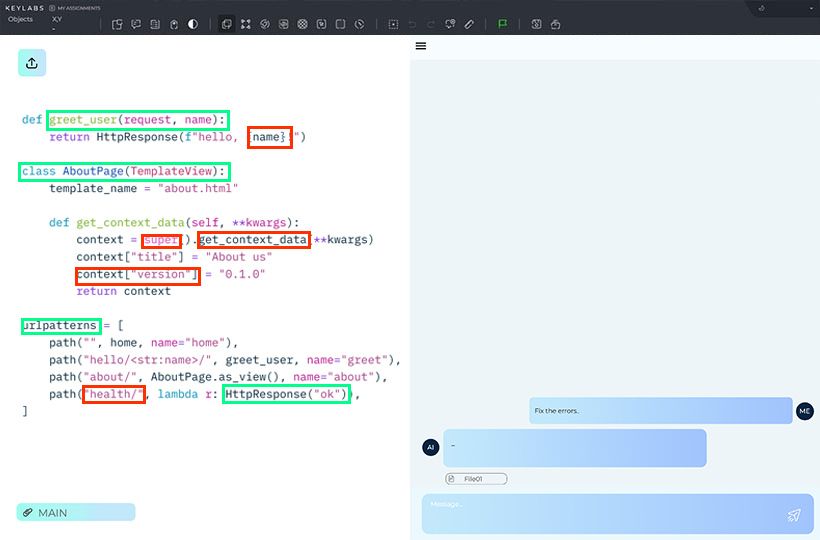

Troubleshooting

When using pairwise comparisons in data collection, various problems can arise that affect the quality and consistency of the results. It is essential to identify them in time and know the strategies for solving them to ensure reliable preference data for training models.

FAQ

What is the primary goal of using paired human feedback for AI?

The main goal is to train the model to favor responses that are most consistent with human preferences and values. This is typically achieved through RLHF annotation, while approaches like constitutional AI aim to embed ethical guidelines directly into the training process.

Why is this method often easier for humans than other rating systems?

This method is more straightforward because it is easier to choose the better of two options than to rate responses on a numerical scale or to rank many items at once.

How does this method handle very long lists of items?

This is done by using partial or adaptive comparisons, which avoid the quadratic growth in the number of pairs (n items produce n(n-1)/2 possible pairs).

What is a common problem when combining human raters with AI?

A common problem is systematic bias, in which human preferences or annotation errors influence the model's training.